Abstract

Video sequences exhibit significant nuisance variations (undesired effects) in the speed of actions, temporal locations, and subjects’ poses, leading to temporal-viewpoint misalignment when comparing two sets of frames or evaluating the similarity of two sequences. Thus, we propose Joint tEmporal and cAmera viewpoiNt alIgnmEnt (JEANIE) for sequence pairs. In particular, we focus on 3D skeleton sequences whose camera and subjects’ poses can be easily manipulated in 3D. We evaluate JEANIE on skeletal Few-shot Action Recognition (FSAR), where accurately matching the temporal blocks (temporal chunks that make up a sequence) of support-query sequence pairs (by factoring out nuisance variations) is essential due to the limited samples of novel classes. Given a query sequence, we create several views of it by simulating several camera locations. A support sequence is then matched with the view-simulated query sequences, as in the popular Dynamic Time Warping (DTW). Specifically, each support temporal block can be matched to the query temporal block with the same or adjacent (next) temporal index, and to adjacent camera views, to achieve joint local temporal-viewpoint warping. JEANIE selects the smallest distance among matching paths with different temporal-viewpoint warping patterns, an advantage over DTW, which only performs temporal alignment. We also propose an unsupervised FSAR akin to clustering of sequences with JEANIE as a distance measure. JEANIE achieves state-of-the-art results on NTU-60, NTU-120, Kinetics-skeleton and UWA3D Multiview Activity II for supervised and unsupervised FSAR, and for their meta-learning inspired fusion.


1 Introduction

Action recognition is a key topic in computer vision, with applications in video surveillance (Wang, 2017; Wang et al., 2019b, 2024b), human-computer interaction, sport analysis and robotics. Many pipelines (Tran et al., 2015; Feichtenhofer et al., 2016, 2017; Carreira & Zisserman, 2017; Koniusz et al., 2013; Lin et al., 2018; Wang et al., 2020b; Koniusz et al., 2022; Wang & Koniusz, 2024; Wang et al., 2021; Wang, 2023; Rahman et al., 2023; Zhang et al., 2024; Wang et al., 2024b; Li et al., 2023a) perform (action) classification given a large amount of labeled training data. However, manually labeling videos for 3D skeleton sequences is laborious, and such pipelines need to be retrained or finetuned for new class concepts. Popular action recognition networks such as the two-stream neural network (Feichtenhofer et al., 2016, 2017; Wang et al., 2017) and 3D Convolutional Neural Network (3D CNN) (Tran et al., 2015; Carreira & Zisserman, 2017) aggregate frame-wise and temporal block representations, respectively. However, such networks are trained on large-scale datasets such as Kinetics (Carreira & Zisserman, 2017; Wang et al., 2019c; Wang & Koniusz, 2021; Wang et al., 2024a) under a fixed set of training classes.

Thus, there exists a growing interest in devising effective Few-shot Learning (FSL) models for action recognition, termed Few-shot Action Recognition (FSAR), that rapidly adapt to novel classes given few training samples (Mishra et al., 2018; Xu et al., 2018; Guo et al., 2018; Dwivedi et al., 2019; Zhang et al., 2020b; Cao et al., 2020; Wang & Koniusz, 2022b). FSAR models are scarce due to the volumetric nature of videos and large intra-class variations.

Skeletal FSAR (simplified overview) takes episodes of query and support sequences, splits them into temporal blocks (\({\textbf{X}}_1,...,{\textbf{X}}_\tau \) and \({\textbf{X}}'_1,...,{\textbf{X}}'_{\tau '} \)), and passes them to the Encoding Network to obtain features \(\varvec{\Psi }=[\varvec{\psi }_1,...,\varvec{\psi }_\tau ]\) and \(\varvec{\Psi }'=[\varvec{\psi }'_1,...,\varvec{\psi }'_{\tau '}]\), which are then passed to the Comparator that typically uses some distance measure \(d(\cdot ,\cdot )\), regularization \(\Omega \) and the similarity classifier \(\ell (\cdot ,\cdot )\).

In contrast, FSL for image recognition has been widely studied (Miller et al., 2000; Li et al., 2002; Fink, 2005; Bart & Ullman, 2005; Fei-Fei et al., 2006; Lake et al., 2011), including contemporary CNN-based FSL methods (Koch et al., 2015; Vinyals et al., 2016; Snell et al., 2017; Finn et al., 2017; Sung et al., 2018; Zhang & Koniusz, 2019) which use meta-learning, prototype-based learning or feature representation learning. In 2020–2024 alone, many FSL methods (Guo et al., 2020; Dvornik et al., 2020; Wang et al., 2020a; Lichtenstein et al., 2020; Luo et al., 2021; Fei et al., 2020; Guan et al., 2020; Li et al., 2020; Elsken et al., 2020; Cao et al., 2020; Tang et al., 2020; Koniusz & Zhang, 2022; Zhang et al., 2022a; Zhu & Koniusz, 2022; Lu & Koniusz, 2022; Zhu & Koniusz, 2023b; Kang et al., 2023; Shi et al., 2024; Zhang et al., 2021, 2020b, 2022c, b; Lu & Koniusz, 2024) have been dedicated to image classification or detection. In this paper, by contrast, we aim at advancing few-shot action recognition of articulated sets of connected 3D body joints, simply put, skeletal FSAR.

With the exception of very recent models (Liu et al., 2017, 2019; Memmesheimer et al., 2021, 2022; Ma et al., 2022; Wang & Koniusz, 2022b; Zhu et al., 2023b), FSAR approaches that learn from skeleton-based 3D body joints are scarce. This situation prevails even though action recognition from articulated sets of connected body joints, expressed as 3D coordinates, offers a number of advantages over videos, such as (i) the lack of background clutter, (ii) a data volume several orders of magnitude smaller, and (iii) 3D geometric manipulations of skeletal sequences that are algorithm-friendly.

Video sequences may be captured under varying camera poses, and subjects may follow different trajectories, resulting in variations of the subjects’ poses. Variations of action speed, location, and motion dynamics are also common. Yet, FSAR has to learn and infer the similarity between support-query sequence pairs from a limited number of samples of novel classes. Thus, a good measure of similarity between support-query sequence pairs has to factor out the above variations. To this end, we propose an FSAR model that learns on skeleton-based 3D body joints via Joint tEmporal and cAmera viewpoiNt alIgnmEnt (JEANIE). We focus on 3D skeleton sequences as the camera/subject’s pose can be easily altered in 3D by the use of projective camera geometry.

JEANIE achieves good matching of queries with support sequences by simultaneously modeling the optimal (i) temporal and (ii) viewpoint alignments. To this end, we build on soft-DTW (Cuturi & Blondel, 2017), a differentiable variant of Dynamic Time Warping (DTW) (Cuturi, 2011) (Fig. 5 illustrates how DTW differs from the Euclidean distance). Given a query sequence, we create several views of it by simulating several camera locations. A support sequence can then be matched with the view-simulated query sequences as in DTW. Specifically, with the goal of computing the optimal distance, each support temporal block (Footnote 1) can be matched to the query temporal block with the same or a neighbouring temporal block index to perform a local time-warping step. Simultaneously, we also let each support temporal block match across adjacent camera views of the query temporal block to achieve camera viewpoint warping. Multiple alignment patterns of query and support blocks result in multiple paths across the temporal and viewpoint modes. Each path thus represents a matching plan describing between which support-query block pairs the feature distances are evaluated and aggregated. By the use of a soft-minimum, the path with the minimum aggregated distance is selected as the output of JEANIE. Thus, while DTW provides the optimal temporal alignment of support-query sequence pairs, JEANIE simultaneously provides the optimal joint temporal-viewpoint alignment.

To facilitate the viewpoint alignment in JEANIE, we use simple 3D geometric operations. Specifically, we obtain skeletons under several viewpoints by rotating skeletons (zero-centered by hip) via Euler angles https://en.wikipedia.org/wiki/Euler_angles, or by generating skeleton locations given simulated camera positions, according to the algebra of stereo projections http://www.cse.psu.edu/~rtc12/CSE486/lecture12.pdf.
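The Euler-angle variant can be sketched in a few lines of NumPy. This is an illustrative sketch, not the authors' code: it assumes a `(3, J, T)` array of joint coordinates, per-frame hip centering, a hypothetical hip joint index of 0, rotations about the x and y axes in fixed 30° steps, and the composition order R_y R_x; all of these are choices made here for illustration. The stereo-projection (camera) variant is not sketched.

```python
import numpy as np

def rot_x(theta):
    """Rotation matrix about the x axis (theta in radians)."""
    c, s = np.cos(theta), np.sin(theta)
    return np.array([[1.0, 0.0, 0.0],
                     [0.0, c, -s],
                     [0.0, s, c]])

def rot_y(theta):
    """Rotation matrix about the y axis (theta in radians)."""
    c, s = np.cos(theta), np.sin(theta)
    return np.array([[c, 0.0, s],
                     [0.0, 1.0, 0.0],
                     [-s, 0.0, c]])

def simulate_views(seq, hip_idx=0, step_deg=30.0, eta_x=1, eta_y=1):
    """seq: (3, J, T) joint coordinates (hip_idx is a hypothetical joint index).

    Returns (K, K', 3, J, T) rotated copies with K = 2*eta_x + 1 and
    K' = 2*eta_y + 1; view [eta_x, eta_y] is the unrotated, hip-centered sequence."""
    centered = seq - seq[:, hip_idx:hip_idx + 1, :]   # zero-center every frame on the hip joint
    ax = np.deg2rad(step_deg * np.arange(-eta_x, eta_x + 1))
    ay = np.deg2rad(step_deg * np.arange(-eta_y, eta_y + 1))
    views = np.empty((len(ax), len(ay)) + seq.shape)
    for i, tx in enumerate(ax):
        for j, ty in enumerate(ay):
            R = rot_y(ty) @ rot_x(tx)                   # one fixed composition order (an assumption)
            views[i, j] = np.einsum("ab,bjt->ajt", R, centered)
    return views
```

Because every view is a pure rotation of the hip-centered skeleton, bone lengths and joint-to-hip distances are preserved; only the apparent viewpoint changes.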

We note that view-adaptive models for action recognition do exist. View Adaptive Recurrent Neural Network (Zhang et al., 2017, 2019) is a classification model equipped with a view-adaptive subnetwork that contains the rotation/translation switches within its RNN backbone and the main LSTM-based network. Temporal Segment Network (Wang et al., 2019e) models long-range temporal structures with a new segment-based sampling and aggregation module. However, such pipelines require a large number of training samples with varying viewpoints and temporal shifts to learn a robust model. Their limitations become evident when a network trained under a fixed set of action classes has to be adapted to samples of novel classes. Our JEANIE does not suffer from such a limitation.

Figure 1 is a simplified overview of our pipeline, which can serve as a template for baseline FSAR. Our pipeline consists of an MLP which takes neighboring frames forming a temporal block; each sequence consists of several such temporal blocks. Moreover, as in Fig. 2, we sample desired Euler rotations or simulated camera viewpoints, generate multiple skeleton views, and pass them to the MLP to get block-wise feature maps fed into a Graph Neural Network (GNN) (Kipf & Welling, 2017; Sun et al., 2019; Wu et al., 2019; Klicpera et al., 2019; Wang et al., 2019d; Zhu & Koniusz, 2021; Zhang et al., 2023a, b). We mainly use a linear S\(^2\)GC (Zhu & Koniusz, 2021; Zhu et al., 2021; Zhu & Koniusz, 2023a; Wang et al., 2023a), with an optional transformer (Dosovitskiy et al., 2020), and an FC layer to obtain block feature vectors passed to JEANIE, whose output distance measurements flow into our similarity classifier. Figure 3 is a detailed overview of our supervised FSAR pipeline.

Note that JEANIE can be thought of as a kernel in Reproducing Kernel Hilbert Spaces (RKHS) (Smola & Kondor, 2003) based on Optimal Transport (Villani, 2009) with a specific temporal-viewpoint transportation plan. As kernels capture the similarity of sample pairs instead of modeling class labels, they are a natural choice for FSL and FSAR problems.

In this paper, we extend our supervised FSAR model (Wang & Koniusz, 2022a) by introducing an unsupervised FSAR model, and a fusion of both supervised and unsupervised models. Our rationale for an unsupervised FSAR extension is to demonstrate that the invariance properties of JEANIE (dealing with temporal and viewpoint variations) help naturally match sequences of the same class without the use of additional knowledge (class labels). Such a setting demonstrates that JEANIE is able to limit intra-class variations (temporal and viewpoint variations) facilitating unsupervised matching of sequences.

For unsupervised FSAR, JEANIE is used as a distance measure in the feature reconstruction term of the dictionary learning and feature coding steps. Features of the temporal blocks are projected into such a dictionary space, and the projection codes representing sequences are used to measure the similarity between support-query sequences. This idea is similar to clustering training sequences into k-means clusters (Csurka et al., 2004) to form a dictionary. The assignments of test query sequences to such a dictionary can then reveal their class labels based on the labeled test support sequences falling into the same cluster. However, even with JEANIE used as a distance measure, the one-hot assignments resulting from k-means are suboptimal. Thus, we investigate more recent soft assignment (Bilmes, 1998; Gemert et al., 2008; Koniusz & Mikolajczyk, 2011; Liu et al., 2011) and sparse coding approaches (Lee et al., 2006; Yang et al., 2009).
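To make the hard-versus-soft distinction concrete, here is a minimal sketch of soft assignment as a softmax over negative distances between N sequences and K dictionary atoms. In the setting above the distance matrix would come from JEANIE; here it is any precomputed array. The function names and the sharpness parameter `beta` are hypothetical, and the coders actually used in the paper (Appendix C) may differ.

```python
import numpy as np

def soft_assign(dist, beta=1.0):
    """dist: (N, K) distances from N sequences to K dictionary atoms.

    Returns (N, K) codes; each row is a softmax of -beta * dist and sums to 1."""
    logits = -beta * dist
    logits = logits - logits.max(axis=1, keepdims=True)   # subtract row max for numerical stability
    w = np.exp(logits)
    return w / w.sum(axis=1, keepdims=True)

def hard_assign(dist):
    """One-hot k-means style assignment to the nearest atom."""
    codes = np.zeros_like(dist)
    codes[np.arange(dist.shape[0]), dist.argmin(axis=1)] = 1.0
    return codes

d = np.array([[0.1, 2.0, 3.0],
              [1.0, 1.05, 5.0]])
codes = soft_assign(d, beta=2.0)   # the second row splits its mass between atoms 0 and 1
```

As `beta` grows, the soft codes approach the one-hot k-means assignment; for small `beta`, a sequence lying between two atoms (as in the second row) keeps weight on both, which is the information a hard assignment discards.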

Finally, we also introduce a simple fusion of supervised and unsupervised FSAR by alignment of supervised and unsupervised FSAR features or by MAML-inspired (Finn et al., 2017) fusion of unsupervised and supervised FSAR losses in the so-called inner and outer loop, respectively.

Our 3D skeleton-based FSAR with JEANIE. Frames from a query sequence and a support sequence are split into short-term temporal blocks \({\textbf{X}}_1,...,{\textbf{X}}_{\tau }\) and \({\textbf{X}}'_1,...,{\textbf{X}}'_{\tau '}\) of length M given stride S. Subsequently, we generate (i) multiple rotations by \((\varDelta \theta _x,\varDelta \theta _y)\) of each query skeleton by Euler angles (baseline approach), or (ii) simulated camera views (gray cameras) by camera shifts \((\varDelta \theta _{az},\varDelta \theta _{alt})\) w.r.t. the assumed average camera location (black camera). We pass all skeletons through the Encoding Network (with an optional transformer) to obtain feature tensors \(\varvec{\varPsi }\) and \(\varvec{\varPsi }'\), which are directed to JEANIE. We note that the temporal-viewpoint alignment takes place in 4D space (we show a 3D case with three views: \(-30^\circ , 0^\circ , 30^\circ \)). Temporally, JEANIE starts from the same \(t\!=\!(1,1)\) and finishes at \(t\!=\!(\tau ,\tau ')\) (as in DTW). Viewpoint-wise, JEANIE starts from every possible camera shift \(\varDelta \theta \in \{-30^\circ , 0^\circ , 30^\circ \}\) (we do not know the true correct pose) and finishes at one of the possible camera shifts. At each step, the path may move by no more than \((\pm \!\varDelta \theta _{az},\pm \!\varDelta \theta _{alt})\) to prevent erroneous alignments. Finally, SoftMin selects the smallest distance (Color figure online)

Below are our contributions:

i. We propose JEANIE, which performs the joint alignment of temporal blocks and simulated camera viewpoints of 3D skeletons between support-query sequences to select the optimal alignment path, realizing joint temporal (time) and viewpoint warping. We evaluate JEANIE on skeletal few-shot action recognition, where correctly matching support and query sequence pairs (by factoring out nuisance variations) is essential due to the limited samples representing novel classes.

ii. To simulate different camera locations for 3D skeleton sequences, we consider rotating them (1) by Euler angles within a specified range along axes, or (2) towards the simulated camera locations based on the algebra of stereo projection.

iii. We propose unsupervised FSAR where JEANIE is used as a distance measure in the feature reconstruction term of the dictionary learning and coding steps (we investigate several such coders). We use projection codes to represent sequences. Moreover, we introduce an effective fusion of the supervised and unsupervised FSAR models by an unsupervised-supervised feature alignment term or by a MAML-inspired fusion of unsupervised and supervised FSAR losses.

iv. As minor contributions, we investigate different GNN backbones (combined with an optional transformer), as well as the optimal temporal size and stride for temporal blocks encoded by a simple 3-layer MLP unit before forwarding them to the GNN. We also propose a simple similarity-based loss encouraging the alignment of within-class sequences and preventing the alignment of between-class sequences.

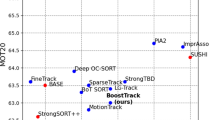

We achieve state-of-the-art results on few-shot action recognition on large-scale NTU-60 (Shahroudy et al., 2016), NTU-120 (Liu et al., 2019), Kinetics-skeleton (Yan et al., 2018), and UWA3D Multiview Activity II (Rahmani et al., 2016).

2 Related Works

Below, we review 3D skeleton-based AR, FSAR approaches, Graph Neural Networks, transformers, and multi-view action recognition.

Action recognition (3D skeletons). 3D skeleton-based action recognition pipelines often use GCNs (Kipf & Welling, 2017), e.g., spatio-temporal GCN (ST-GCN) (Yan et al., 2018), Attention enhanced Graph Convolutional LSTM network (AGC-LSTM) (Si et al., 2019), Actional-Structural GCN (AS-GCN) (Li et al., 2019), Dynamic Directed GCN (DDGCN) (Korban & Li, 2020), Decoupling GCN with DropGraph module (Cheng et al., 2020b), Shift-GCN (Cheng et al., 2020a), Semantics-Guided Neural Networks (SGN) (Zhang et al., 2020a), AdaSGN (Shi et al., 2021), Context Aware GCN (CA-GCN) (Zhang et al., 2020a), Channel-wise Topology Refinement Graph Convolution Network (CTR-GCN) (Chen et al., 2021), Efficient GCN (Song et al., 2022) and Disentangling and Unifying Graph Convolutions (Liu et al., 2020). As ST-GCN applies convolution along links between body joints, structurally distant joints, which may cover key patterns of actions, are largely ignored. While GCN can be applied to a fully-connected graph to capture complex interactions of body joints, groups of nodes across space/time can be captured with tensors (Koniusz et al., 2016, 2022), semi-dynamic hypergraph neural networks (Liu et al., 2020), hypergraph GNN (Hao et al., 2021), angular features (Qin et al., 2022b), Higher-order Transformer (HoT) (Kim et al., 2021) and Multi-order Multi-mode Transformer (3Mformer) (Wang & Koniusz, 2023). PoF2I (Huynh-The et al., 2020) transforms pose features into pixels. Recently, Koopman pooling (Wang et al., 2023b), an auxiliary feature refinement head (Zhou et al., 2023), a Spatial-Temporal Mesh Transformer (STMT) (Zhu et al., 2023a), Strengthening Skeletal Recognizers (Qin et al., 2022a), and Skeleton Cloud Colorization (Yang et al., 2023a) have been proposed for 3D skeleton-based AR.

However, such models rely on large-scale datasets to train large numbers of parameters, and cannot be adapted with ease to novel class concepts whereas FSAR can.

FSAR (videos). Approaches (Mishra et al., 2018; Guo et al., 2018; Xu et al., 2018) use a generative model, graph matching on 3D coordinates and dilated networks, respectively. Another approach (Zhu & Yang, 2018) uses a compound memory network. ProtoGAN (Dwivedi et al., 2019) generates action prototypes. A recent FSAR model (Zhang et al., 2020b) uses permutation-invariant attention and second-order aggregation of temporal video blocks, whereas another approach (Cao et al., 2020) proposes a modified temporal alignment for query-support pairs via DTW. Recent video FSAR models include mixed-supervised hierarchical contrastive learning (HCL) (Zheng et al., 2022), Compound Prototype Matching (Huang et al., 2022), Spatio-temporal Relation Modeling (Thatipelli et al., 2022), motion-augmented long-short contrastive learning (MoLo) (Wang et al., 2023c) and the Active Multimodal Few-shot Action Recognition (AMFAR) framework (Wanyan et al., 2023).

FSAR (3D skeletons). Few FSAR models use 3D skeletons (Liu et al., 2017, 2019; Memmesheimer et al., 2021, 2022; Yang et al., 2023b). Global Context-Aware Attention LSTM (Liu et al., 2017) focuses on informative joints. Action-Part Semantic Relevance-aware (APSR) model (Liu et al., 2019) uses semantic relevance among each body part and action class at the distributed word embedding level. Signal Level Deep Metric Learning (DML) (Memmesheimer et al., 2021) and Skeleton-DML (Memmesheimer et al., 2022) encode signals as images, extract CNN features and use multi-similarity miner loss. New skeletal FSAR includes Disentangled and Adaptive Spatial-Temporal Matching (DASTM) (Ma et al., 2022), Adaptive Local-Component-Aware Graph Convolutional Network (ALCA-GCN) (Zhu et al., 2023b) and uncertainty-DTW (Wang & Koniusz, 2022b).

In contrast, we use temporal blocks of skeleton sequences encoded by GNNs under multiple simulated camera viewpoints to jointly apply temporal and viewpoint alignment of query-support sequences to factor out nuisance variability.

Graph Neural Networks. GNNs modified to act on the specific structure of 3D skeletal data are very popular in action recognition, as detailed in “Action recognition (3D skeletons)” at the beginning of Sect. 2. In this paper, we leverage standard GNNs due to their good ability to represent graph-structured data. GCN (Kipf & Welling, 2017) applies graph convolution in the spectral domain, and enjoys depth-efficiency when stacking multiple layers due to non-linearities. However, depth-efficiency extends the runtime due to backpropagation through consecutive layers. In contrast, a very recent family of so-called spectral filters does not require depth-efficiency but applies filters based on heat diffusion on the graph adjacency matrix. As a result, these are fast linear models, as learnable weights act on filtered node representations. Unlike general GNNs, SGC (Wu et al., 2019), APPNP (Klicpera et al., 2019) and S\(^2\)GC (Zhu & Koniusz, 2021) are three such linear models, which we investigate for the backbone, followed by an optional transformer and an FC layer.

Transformers in action recognition. Transformers have become popular in action recognition (Plizzari et al., 2021; Zhang et al., 2021a, b; Girdhar et al., 2019; Plizzari et al., 2020). Vision Transformer (ViT) (Dosovitskiy et al., 2020) was the first transformer model for image classification, and transformers are also used in recent pre-training models (Haghighat et al., 2024). The success of transformers relies on their ability to establish exhaustive attention among visual tokens. Recent transformer-based AR models include Uncertainty-Guided Probabilistic Transformer (UGPT) (Guo et al., 2022), Recurrent Vision Transformer (RViT) (Yang et al., 2022), Spatio-TemporAl cRoss (STAR)-transformer (Ahn et al., 2023), DirecFormer (Truong et al., 2022), Spatial-Temporal Mesh Transformer (STMT) (Zhu et al., 2023a), Semi-Supervised Video Transformer (SVFormer) (Xing et al., 2023) and Multi-order Multi-mode Transformer (3Mformer) (Wang & Koniusz, 2023).

In this work, we apply a simple optional transformer block with a few layers following the GNN to better capture block-level dependencies of 3D human body joints.

Multi-view action recognition. Multi-modal sensors enable multi-view action recognition (Wang et al., 2020b; Zhang et al., 2017). A Generative Multi-View Action Recognition framework (Wang et al., 2019a) integrates RGB and depth data by View Correlation Discovery Network while Synthetic Humans (Varol et al., 2021) generates synthetic training data to improve generalization to unseen viewpoints. Some works use multiple views of the subject (Shahroudy et al., 2016; Liu et al., 2019; Zhang et al., 2019; Wang et al., 2019a) to overcome the viewpoint variations for action recognition. Recently, a supervised contrastive learning framework (Shah et al., 2023) for multi-view was introduced.

In contrast, our JEANIE performs jointly the temporal and simulated viewpoint alignment in an end-to-end FSAR setting. This is a novel paradigm based on improving the notion of similarity between sequences of support-query pair rather than learning class concepts.

3 Approach

(top) In viewpoint-invariant learning, the distance between query features \(\varvec{\varPsi }\) and support features \(\varvec{\varPsi }'\) has to be computed. The blue arrow indicates that the trajectories of both actions need alignment. (bottom) In real life, a subject’s 3D body joints deviate from one ideal trajectory, so a more advanced viewpoint alignment strategy is needed (Color figure online)

Euclidean dist. vs. DTW. (top) Feature vectors \(\varvec{\psi }_t\) and \(\varvec{\psi }'_{t}\) of query and support frames (or temp. blocks) are matched along time t: \(d_{Euclid}(\varvec{\Psi },\varvec{\Psi }')\!=\!\sum _t d^2(\varvec{\psi }_t, \varvec{\psi }'_{t})\). (bottom) For DTW, a path with minimum aggregated distance is selected as \(d_{DTW}(\varvec{\Psi },\varvec{\Psi }')\!=\!\sum _t d^2(\varvec{\psi }_{m(t)}, \varvec{\psi }'_{n(t)})\), where m(t) and n(t) parameterize query and support indexes. The permitted steps in the graph are \(\downarrow \), \(\searrow \) and \(\rightarrow \). We expect \(d_{DTW}\le d_{Euclid}\).
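The two distances in the caption can be compared directly with a short NumPy sketch. It assumes a squared Euclidean base distance and uses the textbook O(ττ') dynamic program with the steps ↓, ↘ and →; the function names are illustrative, not from the paper.

```python
import numpy as np

def pairwise_sq_dist(P, Q):
    """P: (tau, d), Q: (tau', d) -> (tau, tau') matrix of squared Euclidean distances."""
    return ((P[:, None, :] - Q[None, :, :]) ** 2).sum(-1)

def d_euclid(P, Q):
    """Frame-by-frame matching; only defined for equal-length sequences."""
    if P.shape != Q.shape:
        raise ValueError("Euclidean matching needs sequences of equal length")
    return float(((P - Q) ** 2).sum())

def d_dtw(P, Q):
    """Minimum aggregated distance over monotone paths from (1, 1) to (tau, tau')."""
    D = pairwise_sq_dist(P, Q)
    tau, tau_p = D.shape
    R = np.full((tau + 1, tau_p + 1), np.inf)
    R[0, 0] = 0.0
    for m in range(1, tau + 1):
        for n in range(1, tau_p + 1):
            # predecessors: down (m-1, n), diagonal (m-1, n-1), right (m, n-1)
            R[m, n] = D[m - 1, n - 1] + min(R[m - 1, n], R[m - 1, n - 1], R[m, n - 1])
    return float(R[tau, tau_p])

q = np.array([[0.0], [0.0], [1.0], [2.0]])   # same motion as s, but one step late
s = np.array([[0.0], [1.0], [2.0], [2.0]])
# d_euclid(q, s) -> 2.0, d_dtw(q, s) -> 0.0
```

For equal-length sequences the diagonal path is one of the admissible DTW paths, so the minimum over paths can never exceed the Euclidean sum, which is why d_DTW ≤ d_Euclid is expected.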

To learn similarity and dissimilarity between pairs of sequences of 3D body joints representing query and support samples from episodes, our goal is to find a joint viewpoint-temporal alignment of query and support, and minimize or maximize the matching distance \(d_\text {JEANIE}\) (end-to-end setting) for same or different support-query labels, respectively. Figure 4 (top) shows that sometimes matching of query and support may be as easy as rotating one trajectory onto another, in order to achieve viewpoint invariance. A viewpoint invariant distance (Haasdonk & Burkhardt, 2007) can be defined as:

where T is a set of transformations required to achieve a viewpoint invariance, \(d(\cdot ,\cdot )\) is some base distance, e.g., the Euclidean distance, and \(\varvec{\varPsi }\) and \(\varvec{\varPsi }'\) are features describing query and support pair of sequences. Typically, T may include 3D rotations to rotate one trajectory onto the other. However, a global viewpoint alignment of two sequences is suboptimal. Trajectories are unlikely to be straight 2D lines in the 3D space so one may not be able to rotate the query trajectory to align with the support trajectory. Figure 4 (bottom) shows that the subjects’ poses locally follow complicated non-linear paths, e.g., as in Fig. 5 (bottom).
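Eq. (1) itself is not reproduced in this text. A form consistent with the definitions above, and with the later remark that JEANIE's soft-minimum corresponds to an infimum over a set of specific transformations, is sketched below; g and g' are names introduced here for elements of T, and the original notation (e.g., whether only the query is transformed) may differ.

```latex
d_{\text{inv}}(\boldsymbol{\varPsi},\boldsymbol{\varPsi}')
  = \inf_{g,\,g' \in T} \; d\big(g(\boldsymbol{\varPsi}),\, g'(\boldsymbol{\varPsi}')\big)
```

With T restricted to global 3D rotations this is exactly the "rotate one trajectory onto another" case of Fig. 4 (top); the paragraph above explains why such a global T is insufficient.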

Thus, we propose JEANIE that aligns and warps query / support sequences based on the feature similarity. One can think of JEANIE as performing Eq. (1) with T containing all possible combinations of local time-warping augmentations of sequences and camera pose augmentations for each frame (or temporal block). JEANIE unit in Fig. 3 realizes such a strategy. Figure 6 (discussed later in the text) shows one step of the temporal-viewpoint computations of JEANIE in search for optimal temporal-viewpoint alignment path between query and support sequences. Soft-minimum across all such possible alignment paths can be equivalently written as an infimum over a set of specific transformations in Eq. (1).

Below, we detail our pipeline, and explain the proposed JEANIE, Encoding Network (EN), feature coding and dictionary learning, and our loss function. Firstly, we present our notations.

Notations. \({\mathcal {I}}_{K}\) stands for the index set \(\{1,2,...,K\}\). Concatenation of \(\alpha _i\) is denoted by \([\alpha _i]_{i\in {\mathcal {I}}_{I}}\), whereas \({\varvec{D}}_{:,i}\) means we extract/access column i of matrix \({\varvec{D}}\). Calligraphic mathcal fonts denote tensors (e.g., \(\varvec{{\mathcal {D}}}\)), capitalized bold symbols are matrices (e.g., \({\varvec{D}}\)), lowercase bold symbols are vectors (e.g., \(\varvec{\psi }\)), and regular fonts denote scalars.

Prerequisites. Below, we refer to the prerequisites used in the subsequent sections. Appendix A explains how Euler angles and stereo projections are used to simulate different skeleton viewpoints. Appendix B explains several GNN approaches that we use in our Encoding Network.

Appendix C explains several feature coding and dictionary learning strategies which we use for unsupervised FSAR.

3.1 Encoding Network (EN)

We start by generating \(K\!\times \!K'\) Euler rotations or \(K\!\times \!K'\) simulated camera views (moved gradually from the estimated camera location) of query skeletons. Our EN contains a simple 3-layer MLP unit (FC, ReLU, FC, ReLU, Dropout, FC), a GNN, an optional Transformer (Dosovitskiy et al., 2020) and an FC layer. The MLP unit takes M neighboring frames, each with J 3D skeleton body joints, forming one temporal block \({\textbf{X}}\!\in \!{\mathbb {R}}^{3\times J\times M}\), where 3 indicates 3D Cartesian coordinates. In total, depending on stride S, we obtain \(\tau \) temporal blocks which capture short-term temporal dependencies, whereas long-term temporal dependencies are modeled by JEANIE. Each temporal block is encoded by the MLP into a \(d\!\times \!J\) dimensional feature map:

We obtain \(K\!\times \!K'\!\times \!\tau \) query and \(\tau '\) support feature maps, each of size \(J\times d\). Each map is forwarded to a GNN. For S\(^2\)GC (Zhu & Koniusz, 2021) (the default GNN in our work) with L layers, we have:

JEANIE (1-max shift). We loop over all points. At \((t,t',n)\) (green point) we add its base distance to the minimum of accumulated distances at \((t,t'\!\!-\!1,n\!-\!1)\), \((t,t'\!\!-\!1,n)\), \((t,t'\!\!-\!1,n\!+\!1)\) (orange plane), \((t\!-\!1,t'\!\!-\!1,n\!-\!1)\), \((t\!-\!1,t'\!\!-\!1,n)\), \((t\!-\!1,t'\!\!-\!1,n\!+\!1)\) (red plane) and \((t\!-\!1,t'\!,n\!-\!1)\), \((t\!-\!1,t'\!,n)\), \((t\!-\!1,t'\!,n\!+\!1)\) (blue plane) (Color figure online)

where \(\textbf{S}\) is the adjacency matrix capturing the connectivity of body joints, whereas \(0\le \alpha \le 1\) controls the self-importance of each body joint. Appendix B describes several GNN variants we experimented with: GCN (Kipf & Welling, 2017), SGC (Wu et al., 2019), APPNP (Klicpera et al., 2019) and S\(^2\)GC (Zhu & Koniusz, 2021).
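The block splitting and the parameter-free GNN step described above can be sketched as follows. This is a minimal NumPy sketch under stated assumptions, not the paper's implementation: blocks are cut without padding, so τ = ⌊(T − M)/S⌋ + 1; the per-joint input layout for the MLP (`per_joint_inputs`) is hypothetical; and the filter follows the S²GC form of Zhu & Koniusz (2021), X̂ = (1/L) Σ_{l=1}^{L} ((1 − α) T^l X + α X), with T the symmetrically normalized adjacency with self-loops, which may differ in detail from the equation used here. Learnable weights are omitted; in the EN they act on the filtered features afterwards.

```python
import numpy as np

def split_into_blocks(seq, M, S):
    """Cut a (3, J, T) skeleton sequence into tau blocks of M frames taken every S frames.

    No padding is assumed, so tau = (T - M) // S + 1."""
    T = seq.shape[-1]
    if T < M:
        raise ValueError("sequence is shorter than the block length M")
    return np.stack([seq[..., s:s + M] for s in range(0, T - M + 1, S)])  # (tau, 3, J, M)

def per_joint_inputs(block):
    """Hypothetical layout for a per-joint MLP: (3, J, M) -> (J, 3*M)."""
    c, J, M = block.shape
    return block.transpose(1, 0, 2).reshape(J, c * M)

def normalized_adjacency(A):
    """D^{-1/2} (A + I) D^{-1/2} for a (J, J) binary joint adjacency matrix A."""
    A_tilde = A + np.eye(A.shape[0])
    d_inv_sqrt = 1.0 / np.sqrt(A_tilde.sum(axis=1))
    return d_inv_sqrt[:, None] * A_tilde * d_inv_sqrt[None, :]

def s2gc_propagate(X, A, L=4, alpha=0.05):
    """S^2GC-style filtering of (J, d) joint features; no learnable parameters."""
    T = normalized_adjacency(A)
    out = np.zeros_like(X)
    TX = X
    for _ in range(L):
        TX = T @ TX                              # T^l X for l = 1..L
        out += (1.0 - alpha) * TX + alpha * X    # alpha keeps part of each joint's own feature
    return out / L
```

Averaging over l = 1..L mixes short- and long-range joint neighbourhoods, while α plays the self-importance role described above; α = 1 returns the input unchanged. The values L = 4 and α = 0.05 are illustrative only.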

Optionally, a transformer (Footnote 2; described below in “Transformer Encoder”) may be used. Finally, an FC layer returns \(\varvec{\varPsi }\!\in \!{\mathbb {R}}^{d'\times K\times K'\times \tau }\) query feature maps and \(\varvec{\varPsi }'\!\in \!{\mathbb {R}}^{d'\times \tau '}\!\) support feature maps. Feature maps are passed to JEANIE, whose output is passed into the similarity classifier. The whole Encoding Network is summarized as follows. Let support maps \(\varvec{\varPsi }'\!\equiv \![f({\varvec{X}}'_1;{\mathcal {F}}),...,f({\varvec{X}}'_{\tau '};{\mathcal {F}})]\!\in \!{\mathbb {R}}^{d'\times \tau '}\) and query maps \(\varvec{\varPsi }\!\equiv \![f({\varvec{X}}_{1,1,1};{\mathcal {F}}),...,f({\varvec{X}}_{K,K',\tau };{\mathcal {F}})]\!\in \!{\mathbb {R}}^{d'\times K\times K'\times \tau }\). For M query and M support frames per block, \({\textbf{X}}\!\in \!{\mathbb {R}}^{3\times J\times M}\) and \({\textbf{X}}'\!\in \!{\mathbb {R}}^{3\times J\times M}\). We also define:

where \({\mathcal {F}}\!\equiv \![{\mathcal {F}}_{MLP},{\mathcal {F}}_{GNN},{\mathcal {F}}_{Tr},{\mathcal {F}}_{FC}]\) is the set of parameters of EN (including an optional transformer).

Transformer Encoder. Vision transformer (Dosovitskiy et al., 2020) consists of alternating layers of Multi-Head Self-Attention (MHSA) and a feed-forward MLP (2 FC layers with a GELU non-linearity in between). LayerNorm (LN) is applied before every block, and residual connections are applied after every block. If the transformer is used, each feature matrix \({\widehat{{\textbf{X}}}} \in {\mathbb {R}}^{J \times {d}}\) per temporal block is encoded by a GNN into \(\widehat{{\widehat{{\textbf{X}}}}} \in {\mathbb {R}}^{J \times {d}}\) and then passed to the transformer. Similarly to the standard transformer, we prepend a learnable vector \(\textbf{y}_\text {token}\!\in \!{\mathbb {R}}^{1\times {d}}\) to the sequence of block features \(\widehat{{\widehat{{\textbf{X}}}}}\) obtained from the GNN, and we also add positional embeddings \(\textbf{E}_\text {pos} \in {\mathbb {R}}^{(1+J) \times {d}}\) based on the standard sine and cosine functions, so that the token \(\textbf{y}_\text {token}\) and each body joint have their own unique positional encoding. One can think of our GNN block as replacing the tokenizer linear projection layer of a standard transformer. Compared to the use of an FC layer as the linear projection layer, our GNN tokenizer in Eq. (5) enjoys (i) better embeddings of human body joints based on the graph structure, and (ii) no learnable parameters. From the tokenizer, we obtain \({\textbf{Z}}_0\!\in \!{\mathbb {R}}^{(1+J)\times {d}}\):

and feed it into the following transformer backbone:

where \({\textbf{Z}}^{(0)}_{L_\text {tr}}\) is the first d-dimensional row vector extracted from the output matrix \({\textbf{Z}}_{L_\text {tr}}\!\in \!{\mathbb {R}}^{(J\!+\!1)\times {d}}\), and \(L_\text {tr}\) controls the depth of the transformer (the number of layers), whereas \({\mathcal {F}}\!\equiv \![{\mathcal {F}}_{MLP},{\mathcal {F}}_{GNN},{\mathcal {F}}_{Tr},{\mathcal {F}}_{FC}]\) is the set of parameters of EN. Finally, \(f({\textbf{X}}; {\mathcal {F}})\) from Eq. (9) becomes equivalent to Eq. (4) with the transformer.
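The construction of Z₀ from the GNN output can be sketched as follows. This is an illustration only: it assumes an even d and the common sine/cosine positional encoding with base 10000, treats `y_token` as a fixed array (in training it would be learned), and omits the attention layers themselves. Function names are hypothetical.

```python
import numpy as np

def sinusoidal_positions(n_pos, d):
    """Sine/cosine positional embeddings of shape (n_pos, d); d is assumed even."""
    pos = np.arange(n_pos)[:, None]
    i = np.arange(0, d, 2)[None, :]               # even feature indices 0, 2, 4, ...
    angles = pos / np.power(10000.0, i / d)
    E = np.zeros((n_pos, d))
    E[:, 0::2] = np.sin(angles)
    E[:, 1::2] = np.cos(angles)
    return E

def build_tokens(X_hat_hat, y_token):
    """X_hat_hat: (J, d) GNN output for one temporal block; y_token: (1, d) class token.

    Returns Z0 of shape (1 + J, d): token prepended, then positional embeddings added."""
    J, d = X_hat_hat.shape
    Z0 = np.concatenate([y_token, X_hat_hat], axis=0)
    return Z0 + sinusoidal_positions(1 + J, d)
```

After the transformer layers, the row at index 0 (the token position) is the one read out as the block representation, which is why the token needs its own positional slot distinct from the J body joints.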

3.2 JEANIE

Before detailing the JEANIE measure, we briefly review soft-DTW.

Soft-DTW (Cuturi, 2011; Cuturi & Blondel, 2017). Dynamic Time Warping can be seen as a specialized “metric” with a matching transportation plan (Footnote 3) acting on the temporal mode of sequences. Soft-DTW is defined as:

The binary \({\textbf{A}}\!\in \!{\mathcal {A}}_{\tau ,\tau '}\) encodes a path within the transportation plan \({\mathcal {A}}_{\tau ,\tau '}\), which depends on the lengths \(\tau \) and \(\tau '\) of sequences \(\varvec{\varPsi }\!\equiv \![\varvec{\psi }_1,...,\varvec{\psi }_\tau ]\!\in \!{\mathbb {R}}^{d'\times \tau }\) and \(\varvec{\varPsi }'\!\equiv \![{\varvec{\psi }'}_1,...,{\varvec{\psi }'}_{\tau '}]\!\in \!{\mathbb {R}}^{d'\times \tau '}\). \({\varvec{D}}\!\in \!{\mathbb {R}}_{+}^{\tau \times \tau '}\!\!\equiv \![d_{\text {base}}(\varvec{\psi }_m,\varvec{\psi }'_n)]_{(m,n)\in {\mathcal {I}}_{\tau }\times {\mathcal {I}}_{\tau '}}\) is the matrix of distances, evaluated for \(\tau \!\times \!\tau '\) frames (or temporal blocks) according to some base distance \(d_{\text {base}}(\cdot ,\cdot )\), e.g., the Euclidean distance.
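The soft-minimum over all paths in \({\mathcal {A}}_{\tau ,\tau '}\) is computed in practice by dynamic programming. The following is a minimal NumPy sketch of the soft-DTW recursion of Cuturi & Blondel (2017) over a precomputed distance matrix \({\varvec{D}}\). The smoothing parameter `gamma` is an assumption (its value is not given in this section); as `gamma` approaches 0, the soft-minimum approaches the hard minimum and the value approaches classical DTW.

```python
import numpy as np

def soft_min(values, gamma: float) -> float:
    """Soft-minimum -gamma * log(sum(exp(-v / gamma))), computed stably."""
    v = np.asarray(values, dtype=float)
    m = v.min()
    if not np.isfinite(m):          # all predecessors unreachable
        return np.inf
    return m - gamma * np.log(np.sum(np.exp(-(v - m) / gamma)))

def soft_dtw(D: np.ndarray, gamma: float = 0.1) -> float:
    """Soft-DTW value for a tau x tau' matrix of base distances D."""
    tau, tau_p = D.shape
    R = np.full((tau + 1, tau_p + 1), np.inf)   # accumulator padded with infinity
    R[0, 0] = 0.0
    for t in range(1, tau + 1):
        for tp in range(1, tau_p + 1):
            # Predecessors of (t, t'): (t-1, t'), (t, t'-1) and (t-1, t'-1).
            R[t, tp] = D[t - 1, tp - 1] + soft_min(
                [R[t - 1, tp], R[t, tp - 1], R[t - 1, tp - 1]], gamma)
    return R[tau, tau_p]

# Usage: two 1-D feature sequences (d' = 1) that differ only by a repeated first block.
psi = np.array([[0.0, 1.0, 2.0]])                                  # d' x tau
psi_p = np.array([[0.0, 0.0, 1.0, 2.0]])                           # d' x tau'
D = np.linalg.norm(psi[:, :, None] - psi_p[:, None, :], axis=0)    # tau x tau' Euclidean distances
value = soft_dtw(D, gamma=1e-3)   # close to 0: time warping absorbs the repeated block
```

Note that for larger `gamma` the soft-minimum can drop below the best single path cost, so soft-DTW values may become negative.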

In what follows, we make use of the principles of soft-DTW, i.e., the property of time warping. However, we design a joint alignment between temporal skeleton sequences and simulated skeleton viewpoints, i.e., we achieve joint time-viewpoint warping (which, to the best of our knowledge, has not been done before).

JEANIE. Matching query-support pairs requires temporal alignment due to potential offsets in the locations of discriminative parts of actions, and due to potentially different dynamics/speed of the actions taking place. The same concern applies to the orientation of the actor’s pose, i.e., the pose trajectory w.r.t. the camera. Thus, the JEANIE measure is equipped with an extended transportation plan \({\mathcal {A}}'\!\equiv \!{\mathcal {A}}_{\tau ,\tau ', K, K'}\), where, apart from the temporal block counts \(\tau \) and \(\tau '\), for query sequences we have \(\eta _{az}\) possible left and \(\eta _{az}\) right steps from the initial camera azimuth, and \(\eta _{alt}\) up and \(\eta _{alt}\) down steps from the initial camera altitude. Thus, \(K\!=\!2\eta _{az}\!+\!1\) and \(K'\!=\!2\eta _{alt}\!+\!1\). For the variant with Euler angles, we simply have \({\mathcal {A}}''\!\equiv \!{\mathcal {A}}_{\tau ,\tau ', K, K'}\) where \(K\!=\!2\eta _{x}\!+\!1\) and \(K'\!=\!2\eta _{y}\!+\!1\) instead. The JEANIE formulation is given as:

where \(\varvec{{\mathcal {D}}}\!\in \!{\mathbb {R}}_{+}^{K\times \!K'\!\times \tau \times \tau '}\!\!\!\equiv \![d_{\text {base}}(\varvec{\psi }_{m,k,k'},\varvec{\psi }'_n)]_{\begin{array}{c} (m,n)\in {\mathcal {I}}_{\tau }\!\times \!{\mathcal {I}}_{\tau '}\\ (k,k')\in {\mathcal {I}}_{K}\!\times \!{\mathcal {I}}_{K'} \end{array}},\!\!\!\!\!\!\) and tensor \(\varvec{{\mathcal {D}}}\) contains distances evaluated between all possible temporal blocks.
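The distance tensor \(\varvec{{\mathcal {D}}}\) can be built in one shot with broadcasting. This sketch assumes the Euclidean distance as \(d_{\text {base}}\), an axis layout \(d'\times K\times K'\times \tau \) for the view-simulated query and \(d'\times \tau '\) for the support, and a hypothetical function name `jeanie_distance_tensor`. With \(\eta _{az}\!=\!\eta _{alt}\!=\!3\) steps of \(15^\circ \) (the \([-45^\circ , 45^\circ ]\) range used in the experiments), \(K\!=\!K'\!=\!2\cdot 3+1\!=\!7\).

```python
import numpy as np

def jeanie_distance_tensor(psi: np.ndarray, psi_s: np.ndarray) -> np.ndarray:
    """Euclidean base distances between view-simulated query and support blocks.

    psi:   query features of shape (d', K, K', tau).
    psi_s: support features of shape (d', tau').
    Returns D of shape (K, K', tau, tau').
    """
    # (d', K, K', tau, 1) - (d', 1, 1, 1, tau') -> (d', K, K', tau, tau')
    diff = psi[..., :, None] - psi_s[:, None, None, None, :]
    return np.linalg.norm(diff, axis=0)

eta_az = eta_alt = 3
K, K_p = 2 * eta_az + 1, 2 * eta_alt + 1          # 7 x 7 simulated views
rng = np.random.default_rng(0)
psi = rng.standard_normal((16, K, K_p, 5))         # d' = 16, tau = 5
psi_s = rng.standard_normal((16, 6))               # tau' = 6
D = jeanie_distance_tensor(psi, psi_s)
```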

Figure 6 illustrates one step of JEANIE. Suppose the given set of viewing angles is \(\{-40^{\circ }, -20^{\circ }, 0^{\circ }, 20^{\circ }, 40^{\circ }\}\). For the current node \((t,t'\!,n)\) under evaluation, we aggregate its base distance with the smallest aggregated distance of its predecessor nodes. The “1-max shift” means that the predecessor node must be a direct neighbor of the current node (imagine that dots on a 3D grid are nodes connected by links). Thus, for the 1-max shift, at location \((t,t'\!,n)\), we extract the node’s base distance and add it to the minimum of the aggregated distances at the 9 predecessor nodes shown in Fig. 6. We store that aggregated distance at \((t,t'\!,n)\) and move to the next node. Note that for viewpoint index n, we look up the \((n\!-\!1,n,n\!+\!1)\) neighbors. Extension to the \(\iota \)-max shift is straightforward. A low value of \(\iota \), e.g., \(\iota =1\), is important because it promotes the so-called smoothness of alignment. That is, while time or viewpoint may be warped, they are not warped abruptly (e.g., the subject’s pose is not allowed to suddenly rotate by \(90^{\circ }\) in one step and then rotate back by \(-90^{\circ }\)). This smoothness is key to preventing greedy matching, which would result in an overoptimistic distance between two sequences.
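The predecessor set of a single JEANIE step can be enumerated explicitly. This sketch assumes 0-based indices, lets the viewpoint index move by at most \(\iota \) in either direction, and lets time advance with the DTW steps (1, 0), (0, 1) and (1, 1); for \(\iota =1\) it reproduces the 9 predecessors described above. The function name is hypothetical, and dropping out-of-grid nodes corresponds to assigning them an infinite accumulated distance.

```python
def jeanie_predecessors(n, t, tp, iota=1, num_views=None):
    """Return predecessor nodes (view, t, t') of node (n, t, tp) for the iota-max shift."""
    preds = []
    for i in range(-iota, iota + 1):               # viewpoint shift in [-iota, iota]
        for j, k in ((0, 1), (1, 0), (1, 1)):      # temporal DTW steps
            node = (n - i, t - j, tp - k)
            if node[1] < 0 or node[2] < 0:         # outside the temporal grid
                continue
            if num_views is not None and not 0 <= node[0] < num_views:
                continue                           # outside the viewpoint grid
            preds.append(node)
    return preds

# 5 viewing angles, e.g., {-40, -20, 0, 20, 40} degrees -> indices 0..4.
inner = jeanie_predecessors(2, 3, 3, iota=1, num_views=5)   # interior node
```

In general an interior node has \(3(2\iota +1)\) predecessors, so the cost per node grows linearly with \(\iota \).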

Algorithm 1 illustrates JEANIE. For brevity, let us tackle the camera viewpoint alignment along the azimuth only, i.e., for shifting steps \(-\eta ,...,\eta \), each of size \(\varDelta \theta _{az}\). The maximum viewpoint change from block to block is the \(\iota \)-max shift (smoothness). As we have no way of knowing the optimal initial camera shift, we initialize all possible origins of shifts in the accumulator, \(r_{n,1,1}\!=\!d_{\text {base}}(\varvec{\psi }_{n,1}, \varvec{\psi }'_{1})\) for all \(n\!\in \!\{-\eta , ..., \eta \}\). Subsequently, the soft-DTW-like steps (temporal-viewpoint matching) take place. Finally, we choose the path with the smallest distance over all possible viewpoint ends by selecting a soft-minimum over \([r_{n,\tau ,\tau '}]_{n\in \{-\eta , ..., \eta \}}\). Notice that elements of the accumulator tensor \(\varvec{{\mathcal {R}}}\in {\mathbb {R}}^{(2\eta +1)\times \tau \times \tau '}\) are accessed by writing \(r_{n,t,t'}\). Moreover, whenever any index \(n\!-\!i\), \(t\!-\!j\) or \(t'\!-\!k\) in \(r_{n\!-\!i,t\!-\!j,t'\!-\!k}\) (see algorithm) is out of bounds, we define \(r_{n\!-\!i,t\!-\!j,t'\!-\!k}=\infty \).
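The steps of Algorithm 1 can be sketched in NumPy as follows, restricted to a single viewpoint mode (azimuth). This is a minimal sketch under stated assumptions: viewpoints are stored 0-based (index 0 to \(2\eta \) maps to shifts \(-\eta \) to \(\eta \)), base distances are precomputed, and the soft-minimum uses a smoothing parameter `gamma` whose value is our choice, not the paper's.

```python
import numpy as np

def soft_min(values, gamma):
    """Soft-minimum -gamma * log(sum(exp(-v / gamma))), computed stably."""
    v = np.asarray(values, dtype=float)
    m = v.min()
    if not np.isfinite(m):
        return np.inf
    return m - gamma * np.log(np.sum(np.exp(-(v - m) / gamma)))

def jeanie(D: np.ndarray, iota: int = 1, gamma: float = 0.01) -> float:
    """JEANIE distance for D of shape (2*eta+1, tau, tau'), azimuth only.

    D[n, t, tp] = d_base(psi_{n,t}, psi'_{tp}).
    """
    V, tau, tau_p = D.shape
    R = np.full((V, tau, tau_p), np.inf)
    R[:, 0, 0] = D[:, 0, 0]                  # every viewpoint may start a path
    for t in range(tau):
        for tp in range(tau_p):
            if t == 0 and tp == 0:
                continue
            for n in range(V):
                # Out-of-bounds predecessors are skipped (treated as infinity).
                preds = [R[n - i, t - j, tp - k]
                         for i in range(-iota, iota + 1)
                         for j, k in ((0, 1), (1, 0), (1, 1))
                         if 0 <= n - i < V and t - j >= 0 and tp - k >= 0]
                R[n, t, tp] = D[n, t, tp] + soft_min(preds, gamma)
    return soft_min(R[:, -1, -1], gamma)     # best over all viewpoint ends

# Toy case: a zero-cost path exists only if the viewpoint drifts by one step per block.
D3 = np.ones((3, 3, 3))
for n in range(3):
    D3[n, n, n] = 0.0
smooth = jeanie(D3, iota=1, gamma=1e-3)      # about 0: follows the drifting view
fixed = jeanie(D3, iota=0, gamma=1e-3)       # about 2: each path stays in one view
```

Setting `iota=0` reduces the recursion to view-wise soft-DTW followed by a soft-minimum over views, which is why the smooth path found with `iota=1` can only lower the distance.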

Fig. 7 A comparison of paths in 3D for soft-DTW, Free Viewpoint Matching (FVM) and our JEANIE. For a given query skeleton sequence (green), we choose viewing angles between \(-45^\circ \) and \(45^\circ \) for the camera viewpoint simulation. The support skeleton sequence is shown in black. (a) Soft-DTW finds an individual alignment per viewpoint, with the viewpoint fixed throughout the alignment: \(d_\text {shortest}\!=\!4.08\). Notice that each path stays within the same view; it does not cross into other views. (b) FVM is a greedy matching algorithm that, at each time step, seeks the best-aligned pose among all viewpoints, which leads to an unrealistic zigzag path (a person cannot suddenly jump from the front view to the back view): \(d_\text {FVM}\!=\!2.53\). (c) Our JEANIE (1-max shift) finds a smooth joint viewpoint-temporal alignment between support and query sequences. We show the optimal path for each possible starting position: \(d_\text {JEANIE}\!=\!3.69\). While \(d_\text {FVM}\!=\!2.53\) for FVM is overoptimistic and \(d_\text {shortest}\!=\!4.08\) for fixed-view matching is too pessimistic, JEANIE strikes the right matching balance with \(d_\text {JEANIE}\!=\!3.69\).

Free Viewpoint Matching (FVM). To ascertain whether JEANIE is better than performing the temporal and simulated viewpoint alignments separately, we introduce an important and plausible baseline called Free Viewpoint Matching. At every step of DTW, FVM seeks the best local viewpoint alignment, thus realizing a non-smooth temporal-viewpoint path, in contrast to JEANIE. To this end, we apply soft-DTW in Eq. (12) with the base distance replaced by:

where \(\varvec{\varPsi }\!\in \!{\mathbb {R}}^{d'\times K\times K'\times \tau }\) and \(\varvec{\varPsi }'\!\in \!{\mathbb {R}}^{d'\times K\times K'\times \tau '}\!\) are the query and support feature maps. We slightly abuse the notation by writing \(d_{\text {FVM}}(\varvec{\psi }_{t},\varvec{\psi }'_{t'})\), as we minimize over viewpoint indices inside Eq. (13). Thus, we calculate the distance matrix \({\varvec{D}}\!\in \!{\mathbb {R}}_{+}^{\tau \times \tau '}\!\!\equiv \![d_{\text {FVM}}(\varvec{\psi }_t,\varvec{\psi }'_{t'})]_{(t,t')\in {\mathcal {I}}_{\tau }\times {\mathcal {I}}_{\tau '}}\) for soft-DTW.
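For contrast with JEANIE, here is a minimal NumPy sketch of the FVM baseline: the base distance between two temporal blocks is first minimized over all viewpoint pairs, and DTW is then run on the resulting \(\tau \times \tau '\) matrix. For readability it assumes a hard minimum (instead of a soft-minimum), the Euclidean base distance, and hypothetical function names.

```python
import numpy as np

def fvm_distance_matrix(psi, psi_s):
    """D[t, t'] = min over viewpoint pairs of the Euclidean block distance.

    psi:   query features (d', K, K', tau); psi_s: support features (d', K, K', tau').
    """
    d, K, K_p, tau = psi.shape
    q = psi.reshape(d, K * K_p, tau)                      # flatten both viewpoint modes
    s = psi_s.reshape(d, K * K_p, psi_s.shape[-1])
    diff = q[:, :, None, :, None] - s[:, None, :, None, :]   # (d', V, V, tau, tau')
    return np.linalg.norm(diff, axis=0).min(axis=(0, 1))     # greedy per-block viewpoint choice

def dtw(D):
    """Classical DTW with steps (1, 0), (0, 1) and (1, 1)."""
    tau, tau_p = D.shape
    R = np.full((tau + 1, tau_p + 1), np.inf)
    R[0, 0] = 0.0
    for t in range(1, tau + 1):
        for tp in range(1, tau_p + 1):
            R[t, tp] = D[t - 1, tp - 1] + min(R[t - 1, tp], R[t, tp - 1], R[t - 1, tp - 1])
    return R[tau, tau_p]

rng = np.random.default_rng(0)
psi = rng.standard_normal((8, 3, 3, 5))      # d' = 8, K = K' = 3, tau = 5
psi_s = rng.standard_normal((8, 3, 3, 6))    # tau' = 6
d_fvm = dtw(fvm_distance_matrix(psi, psi_s))
```

Since each block picks its own viewpoint pair independently, the FVM distance can never exceed the distance obtained with any single fixed viewpoint pair, which is the overoptimism discussed below.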

Figure 7 shows the comparison between soft-DTW (view-wise), FVM and our JEANIE. FVM is a greedy matching method which leads to a complex zigzag path in 3D space (we illustrate the camera viewpoint in a single mode, e.g., the azimuth for \(\varvec{\psi }_{n,t}\), and no viewpoint mode for \(\varvec{\psi }'_{t'}\)). Although FVM produces a path with a smaller aggregated distance than soft-DTW and JEANIE, it suffers from obvious limitations: (i) it is unreasonable for poses in a given sequence to match under extreme, sudden changes of viewpoint; (ii) even if two sequences are from two different classes, FVM still yields the smallest distance of the three measures (decreased inter-class variance).

3.3 Loss Function for Supervised FSAR

For the N-way Z-shot problem, we have one query feature map and \(N\!\times \!Z\) support feature maps per episode. We form a mini-batch containing B episodes. Thus, we have query feature maps \(\{\varvec{\varPsi }_b\}_{b\in {\mathcal {I}}_{B}}\) and support feature maps \(\{\varvec{\varPsi }'_{b,n,z}\}_{\begin{array}{c} b\in {\mathcal {I}}_{B}\\ n\in {\mathcal {I}}_{N}\\ z\in {\mathcal {I}}_{Z} \end{array}}\). Moreover, \(\varvec{\varPsi }_b\) and \(\varvec{\varPsi }'_{b,1,:}\) share the same class, one of N classes drawn per episode, forming the subset \(C^{\ddagger } \equiv \{c_1,...,c_N \} \subset {\mathcal {I}}_C \equiv {\mathcal {C}}\).

Specifically, labels \(y(\varvec{\varPsi }_b)\!=\!y(\varvec{\varPsi }'_{b,1,z}), \forall b\!\in \!{\mathcal {I}}_{B}, z\!\in \!{\mathcal {I}}_{Z}\) while \(y(\varvec{\varPsi }_b)\!\ne \!y(\varvec{\varPsi }'_{b,n,z}), \forall b\!\in \!{\mathcal {I}}_{B},n\!\in \!{\mathcal {I}}_{N}\!{\setminus }\!\{1\}, z\!\in \!{\mathcal {I}}_{Z}\). In most cases, \(y(\varvec{\varPsi }_b)\!\ne \!y(\varvec{\varPsi }_{b'})\) if \(b\!\ne \!b'\) and \(b,b'\!\in \!{\mathcal {I}}_{B}\). Selection of \(C^{\ddagger }\) per episode is random. For the N-way Z-shot protocol, we minimize:

and \({\varvec{d}}^+\) is a set of within-class distances for the mini-batch of size B given the N-way Z-shot learning protocol. By analogy, \({\varvec{d}}^-\) is a set of between-class distances. Function \(\mu (\cdot )\) is simply the mean over coefficients of the input vector, \(\{\cdot \}\) detaches its argument from the computational graph during backpropagation, whereas \(TopMin_\beta (\cdot )\) and \(TopMax_{NZ\beta }(\cdot )\) return the \(\beta \) smallest and the \(NZ\beta \) largest coefficients of the input vectors, respectively. Thus, Eq. (14) promotes within-class similarity while Eq. (15) reduces between-class similarity. Integer \(\beta \!\ge \!0\) controls the focus on difficult examples, e.g., \(\beta \!=\!1\) encourages all within-class distances in Eq. (14) to be close to the positive target \(\mu (TopMin_\beta (\cdot ))\), the smallest observed within-class distance in the mini-batch. If \(\beta \!>\!1\), the positive target is relaxed. By analogy, if \(\beta \!=\!1\), we encourage all between-class distances in Eq. (15) to approach the negative target \(\mu (TopMax_{NZ\beta }(\cdot ))\), the average over the NZ largest between-class distances. If \(\beta \!>\!1\), the negative target is relaxed.
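The indexing of \({\varvec{d}}^+\) and \({\varvec{d}}^-\) and the two targets can be illustrated with a short NumPy sketch. The distances are arranged in a hypothetical array `dist[b, n, z]`, where class index n = 0 holds the query's own class (the text's \(\varvec{\varPsi }'_{b,1,z}\)). The penalty form, a squared deviation of each mean from its target, is our assumption since Eqs. (14) and (15) are not reproduced here; NumPy has no autograd, so the detachment \(\{\cdot \}\) appears only as a comment.

```python
import numpy as np

def split_distances(dist):
    """dist[b, n, z]: JEANIE distance between query b and support (n, z); n = 0 is the query's class."""
    d_pos = dist[:, 0, :].ravel()          # within-class distances, B*Z values
    d_neg = dist[:, 1:, :].ravel()         # between-class distances, B*(N-1)*Z values
    return d_pos, d_neg

def targets(d_pos, d_neg, beta, N, Z):
    """Positive/negative targets; in an autograd framework both would be detached."""
    pos_target = np.mean(np.sort(d_pos)[:beta])                   # mu(TopMin_beta(d+))
    neg_target = np.mean(np.sort(d_neg)[::-1][:N * Z * beta])     # mu(TopMax_{NZ beta}(d-))
    return pos_target, neg_target

def episode_loss(dist, beta=1):
    """Assumed penalty: squared deviation of each mean distance from its target."""
    B, N, Z = dist.shape
    d_pos, d_neg = split_distances(dist)
    pos_t, neg_t = targets(d_pos, d_neg, beta, N, Z)
    return (np.mean(d_pos) - pos_t) ** 2 + (np.mean(d_neg) - neg_t) ** 2

dist = np.arange(6, dtype=float).reshape(2, 3, 1)   # B = 2 episodes, 3-way 1-shot
loss = episode_loss(dist, beta=1)
```

With \(\beta =1\), the positive target is the single smallest within-class distance, so the first term vanishes only when all within-class distances in the batch are equal to it.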

3.4 Feature Coding and Dictionary Learning for Unsupervised FSAR

Recall from Sect. 1 that unsupervised FSAR forms a dictionary from the training data without the use of labels. Assigning the labeled test support samples and the test query to cells of the dictionary lets us infer the query label by associating the query with a support sample (to paraphrase, if they share the same dictionary cell, they share the class label).

In this setting, we also use a mini-batch with B episodes. Thus, B query samples and BNZ support samples give a total of \(N'\!=\!B(NZ\!+\!1)\) samples per batch for feature coding and dictionary learning. Let dictionary \({\varvec{M}}\!\in \!{\mathbb {R}}^{d'\cdot \tau ^* \times k}\) and dictionary-coded matrix \(\varvec{A}\equiv [\varvec{\alpha }_1,...,\varvec{\alpha }_{N'}] \!\in \! {\mathbb {R}}^{k \times N'}\). Let \(\tau ^*\) be set as the average number of temporal blocks over training sequences. For dictionary \({\varvec{M}}\) and some codes \(\varvec{A}\), the reconstructed feature map is given as \({\varvec{M}}\varvec{A}\in {\mathbb {R}}^{d' \cdot \tau ^* \times N'}\). In what follows, we reshape the reconstructed feature map so that \({\varvec{M}}\varvec{A}\in {\mathbb {R}}^{d' \times \tau ^* \times N'}\). The feature maps per batch are given as \(\varvec{\varPsi }\!\in \! {\mathbb {R}}^{d'\times K\times K'\times \tau \times N'}\). All query and support sequences per batch form a set \( \varUpsilon \equiv \{\varvec{\varPsi }_{b}\}_{b\in {\mathcal {I}}_{B}}\cup \{\varvec{\varPsi }'_{b,n,z}\}_{\begin{array}{c} b\in {\mathcal {I}}_{B}\\ n\in {\mathcal {I}}_{N}\\ z\in {\mathcal {I}}_{Z} \end{array}}\) with \(N'\) feature maps, which we select by writing \(\varvec{\varPsi }_{i}\in \varUpsilon \) where \(i=1,...,N'\). They are obtained from the Encoding Network in the same way as for supervised FSAR, except that both query and support sequences are now equipped with \(K\times K'\) viewpoints. Algorithm 2 and Fig. 8 illustrate unsupervised FSAR learning with JEANIE. In short, we minimize the following loss w.r.t. \({\mathcal {F}}\), \({\varvec{M}}\) and \(\varvec{A}\) by alternating over these variables:
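The shape bookkeeping of the dictionary-based reconstruction can be checked with a short NumPy sketch. Sizes are reduced for illustration (the text uses \(k\!=\!4096\)), the codes are random non-negative columns normalized to sum to one, and the reshape assumes that the \(d'\cdot \tau ^*\) rows of \({\varvec{M}}\) are stored with the \(d'\) index varying slowest; the text does not specify this ordering.

```python
import numpy as np

d_prime, tau_star, k = 50, 30, 512      # d' (UWA3D), tau* (smaller datasets), reduced k
B, N, Z = 4, 5, 1                       # episodes per batch, N-way, Z-shot
N_prime = B * (N * Z + 1)               # B queries + B*N*Z supports = 24

rng = np.random.default_rng(0)
M = rng.standard_normal((d_prime * tau_star, k))      # dictionary, one codeword per column
A = rng.random((k, N_prime))
A /= A.sum(axis=0, keepdims=True)                     # l1-normalized codes, one column per sequence

recon = (M @ A).reshape(d_prime, tau_star, N_prime)   # one d' x tau* map per sequence
```

Each slice `recon[:, :, i]` is the reconstruction \({\varvec{M}}\varvec{\alpha }_i\) that JEANIE compares against \(\varvec{\varPsi }_i\).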

where \({\mathcal {F}}\!\equiv \![{\mathcal {F}}_{MLP},{\mathcal {F}}_{GNN},{\mathcal {F}}_{Tr},{\mathcal {F}}_{FC}]\) is the set of parameters of EN associated with \(\varvec{\varPsi }\), that is, the feature maps depend on these parameters, so we work with a function \(\varvec{\varPsi }({\mathcal {F}})\) rather than a constant.

Fig. 8 Unsupervised FSAR uses the JEANIE measure as a distance between the feature map \(\varvec{\Phi }\) of a sequence and its dictionary-based reconstruction \({\varvec{M}}\varvec{\alpha }\). LcSA performs feature coding to obtain the dictionary-coded \(\varvec{\alpha }\). DL learns the dictionary \({\varvec{M}}\).

Similarly to the Euclidean distance, \(d_{\text {JEANIE}}(\cdot ,\cdot )\) in Eq. (16) pursues the reconstruction of the feature map \(\varvec{\varPsi }_i\) by the linear combination of dictionary codewords, given as \({\varvec{M}}\varvec{\alpha }_i\). The reconstruction error \(d^2_{\text {JEANIE}}(\varvec{\varPsi }_i, {\varvec{M}}\varvec{\alpha }_i)\) is encouraged to be small. However, unlike the Euclidean distance, JEANIE ensures temporal and viewpoint alignment of sequences \(\varvec{\varPsi }_i\) with the dictionary-based reconstruction \({\varvec{M}}\varvec{\alpha }_i\). Constraint \(\varvec{\Omega }(\varvec{\alpha }_i, {\varvec{M}}, \varvec{\varPsi }_i)\) is a regularization term depending on the selection of feature coding method. Such a regularization encourages discriminative description, i.e., similar and different feature vectors obtain similar and different dictionary-coded representations, respectively. Appendix C provides details of several feature coding and dictionary learning strategies which determine \(\varvec{\Omega }\). In our work, the default choice is Soft Assignment and Dictionary learning from Appendices C.1 and C.2 due to their simplicity and good performance. As the Soft Assignment code (Ni et al., 2022) was adapted to use JEANIE, we kept their number of iterations \(\texttt {alpha\_iter}\!=\!50\), \(\texttt {dic\_iter}\!=\!5\). Dictionary size \(k\!=\!4096\) was optimal, whereas \(\tau ^*\) ranged between 30 and 60 for smaller and larger datasets, respectively.

During testing, we use the trained model \({\mathcal {F}}\) and the learnt dictionary \({\varvec{M}}\), and pass the test support and query sequences through Eq. (16), but solve only w.r.t. \(\varvec{A}\) until \(\varvec{A}\) converges. Subsequently, we compare the dictionary-coded vectors of query sequences with the corresponding dictionary-coded vectors of support sequences by using some distance measure, e.g., the \(\ell _1\) or \(\ell _2\) norm. We also explore kernel-based distances, e.g., the Histogram Intersection Kernel (HIK) distance and the Chi-Square Kernel (CSK) distance, as they are designed for comparing vectors constrained to the \(\ell _1\) simplex (Soft Assignment produces \(\ell _1\)-normalised codes \(\varvec{\alpha }\)). The construction of a kernel distance involves a transformation from similarities to distances.

Let \(\varvec{\alpha }\) and \(\varvec{\alpha }'\) be dictionary-coded vectors obtained by the use of JEANIE in Eq. (16). Then, for a kernel function \(k(\varvec{\alpha }, \varvec{\alpha }')\), the induced distance between \(\varvec{\alpha }\) and \(\varvec{\alpha }'\) is given by \( d(\varvec{\alpha }, \varvec{\alpha }') = k(\varvec{\alpha }, \varvec{\alpha }) + k(\varvec{\alpha }', \varvec{\alpha }') - 2k(\varvec{\alpha }, \varvec{\alpha }')\). Let \(\Vert \varvec{\alpha }\Vert _1\!=\!\Vert \varvec{\alpha }'\Vert _1\!=\!1\) (Soft Assignment codes are non-negative and \(\ell _1\)-normalised), so that \(k(\varvec{\alpha }, \varvec{\alpha })\!=\!k(\varvec{\alpha }', \varvec{\alpha }')\!=\!1\) for both kernels below. The HIK distance for \(k_\text {HIK}(\varvec{\alpha }, \varvec{\alpha }')\!=\!\sum _{i}\text {min}(\alpha _i, \alpha '_i)\) is given as \(d_\text {HIK}(\varvec{\alpha }, \varvec{\alpha }')\! = \!2-2k_\text {HIK}(\varvec{\alpha }, \varvec{\alpha }')\). The CSK distance for kernel \(k_\text {CSK}(\varvec{\alpha }, \varvec{\alpha }')\!=\!\sum _{i}\!\frac{2\alpha _i \alpha '_i}{\alpha _i + \alpha '_i}\) is \(d_\text {CSK}(\varvec{\alpha }, \varvec{\alpha }')\! = \!2\!-\!2k_\text {CSK}(\varvec{\alpha }, \varvec{\alpha }')\).

The nearest neighbor of the test query among the elements of the test support set determines the test label of the query sequence.
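A minimal NumPy sketch of the two kernel-induced distances and the 1-NN decision, assuming non-negative, \(\ell _1\)-normalized codes (so that \(k(\varvec{\alpha },\varvec{\alpha })=1\) for both kernels). The small `eps` guarding empty bins in the CSK distance, the function names and the toy labels are additions for illustration.

```python
import numpy as np

def hik_distance(a, b):
    """d_HIK = 2 - 2 * sum_i min(a_i, b_i) for l1-normalized non-negative codes."""
    return 2.0 - 2.0 * np.minimum(a, b).sum()

def csk_distance(a, b, eps=1e-12):
    """d_CSK = 2 - 2 * sum_i 2 a_i b_i / (a_i + b_i); eps avoids 0/0 on empty bins."""
    return 2.0 - 2.0 * np.sum(2.0 * a * b / (a + b + eps))

def nearest_neighbor_label(query_code, support_codes, support_labels, dist=hik_distance):
    """Return the label of the support code closest to the query code (1-NN)."""
    d = [dist(query_code, s) for s in support_codes]
    return support_labels[int(np.argmin(d))]

supports = np.array([[0.0, 0.0, 1.0], [0.5, 0.5, 0.0]])
labels = ["walk", "wave"]
query = np.array([0.6, 0.4, 0.0])
pred = nearest_neighbor_label(query, supports, labels)
```

Both distances lie in \([0, 2]\): they are 0 for identical codes and 2 for codes with disjoint support.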

3.5 Fusion of Supervised and Unsupervised FSAR

Our final contribution is to introduce four simple strategies for fusing our supervised and unsupervised FSAR approaches to boost performance. As supervised learning is label-driven and unsupervised learning is reconstruction-driven, we expect the two to produce complementary feature spaces amenable to fusion.

In what follows, we make use of both support and query feature maps defined over multiple viewpoints (\(\varvec{\varPsi },\varvec{\varPsi }'\!\in \!{\mathbb {R}}^{d'\times K\times K'\times \tau }\)):

A weighted fusion of supervised and unsupervised FSAR scores. The simplest strategy is to train the supervised and unsupervised FSAR models separately and combine their predictions during testing. We call this baseline “weighted fusion”. During testing, we combine the distances of the supervised and unsupervised models as follows:

where \(d_{\alpha }(\cdot , \cdot )\) is the distance measure for dictionary-encoded vectors, e.g., the \(\ell _1\) norm, HIK distance or CSK distance, and \(0\le \rho \le 1\) balances the impact of the supervised and unsupervised models.
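A sketch of test-time weighted fusion, assuming that Eq. (17) is the convex combination \(\rho \, d_\text {JEANIE} + (1-\rho )\, d_{\alpha }\) (our reading of the sentence above, not a reproduced equation). Per-support distances from both models are taken as precomputed arrays, and the function name is hypothetical.

```python
import numpy as np

def fused_prediction(d_sup, d_unsup, labels, rho=0.5):
    """Combine per-support distances of the two models and return the 1-NN label.

    d_sup:   JEANIE distances from the supervised model, one per support sample.
    d_unsup: distances between dictionary codes (e.g., HIK), one per support sample.
    rho:     weight in [0, 1]; rho = 1 uses only the supervised model.
    """
    if not 0.0 <= rho <= 1.0:
        raise ValueError("rho must lie in [0, 1]")
    fused = rho * np.asarray(d_sup) + (1.0 - rho) * np.asarray(d_unsup)
    return labels[int(np.argmin(fused))]

d_sup, d_unsup, labels = [1.0, 2.0], [2.0, 0.0], ["a", "b"]
pred_half = fused_prediction(d_sup, d_unsup, labels, rho=0.5)   # fused = [1.5, 1.0]
```

Because the two distances may live on different scales, \(\rho \) acts as a tunable trade-off rather than a fixed constant.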

Finetuning the unsupervised model by supervised FSAR. For this baseline strategy, we first train the model using unsupervised FSAR, and then we finetune the learnt unsupervised model using supervised FSAR. During testing, we evaluate supervised learning, unsupervised learning and a fusion of both based on Eq. (17). In this case, one EN is trained, which results in two sets of parameters: the first set is based on unsupervised training and the second set is based on supervised finetuning. We call it “finetuning unsup.”

MAML-inspired fusion of supervised and unsupervised FSAR. Inspired by the success of MAML (Finn et al., 2017) and the categorical learner (Li et al., 2023b), we introduce a fusion strategy where the inner loop uses unsupervised FSAR (Eq. (16)) and the outer loop uses the supervised learning loss (Eqs. (14) and (15)) for the model update. Algorithm 3 details this strategy, which we call “MAML-inspired fusion”.

Specifically, we start by generating representations with several viewpoints. For each mini-batch of size B, we form a set of \(N'\) feature maps, which is passed to Algorithm 2; Algorithm 2 updates the EN parameters \({\mathcal {F}}\) towards \(\widehat{{\mathcal {F}}}\), which accommodate unsupervised reconstruction-driven learning (the so-called task-specific gradient, where the task is unsupervised learning). We then recompute the \(N'\) feature maps based on parameters \(\widehat{{\mathcal {F}}}\). Finally, we apply the supervised loss to these feature maps but now update parameters \({{\mathcal {F}}}\), which means that parameters \({{\mathcal {F}}}\) are tuned for the global label-driven task with the help of the unsupervised task.

Intuitively, it is a second-order gradient model. Specifically, one takes a gradient step in the direction pointed to by the unsupervised loss to obtain task-specific EN parameters. Subsequently, given these task-specific parameters, task-specific feature maps are extracted and passed into the supervised loss to perform a gradient descent step in the direction pointed to by the supervised loss, which yields the update of the global EN parameters.
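The second-order nature of this update can be seen on a toy problem with quadratic losses, where the gradient through the inner step has a closed form. This is purely illustrative: the quadratic stand-ins for the unsupervised and supervised losses, the step sizes `lr_inner` and `lr_outer`, and the function names are assumptions, not the losses of Eqs. (14) to (16).

```python
import numpy as np

def inner_step(F, H, a, lr_inner):
    """One gradient step on the unsupervised stand-in L_u(F) = 0.5 (F - a)^T H (F - a)."""
    return F - lr_inner * H @ (F - a)

def supervised_loss(F_hat, b):
    """Supervised stand-in L_s(F_hat) = 0.5 ||F_hat - b||^2."""
    return 0.5 * np.sum((F_hat - b) ** 2)

def meta_gradient(F, H, a, b, lr_inner):
    """Gradient of L_s(inner_step(F)) w.r.t. the global parameters F.

    The chain rule brings in the Jacobian of the inner step, I - lr_inner * H,
    where H is the Hessian of the unsupervised loss: this is the second-order term.
    """
    F_hat = inner_step(F, H, a, lr_inner)
    jac = np.eye(len(F)) - lr_inner * H
    return jac.T @ (F_hat - b)

rng = np.random.default_rng(0)
n = 4
Q = rng.standard_normal((n, n))
H = Q @ Q.T + n * np.eye(n)                 # symmetric positive-definite Hessian
a, b, F = rng.standard_normal((3, n))
lr_inner, lr_outer = 0.05, 0.1
F_new = F - lr_outer * meta_gradient(F, H, a, b, lr_inner)   # outer update of the global F
```

Dropping the Jacobian (using `F_hat - b` directly) gives the common first-order approximation, which ignores how the unsupervised step reshapes the supervised objective.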

Fusion by alignment of supervised and unsupervised feature maps. Inspired by domain adaptation (Koniusz et al., 2017, 2018; Tas & Koniusz, 2018), Algorithm 4 in Appendix D is an easy-to-interpret simplification (called “adaptation-based”) of the above MAML-inspired fusion. Instead of complex gradient interplay between unsupervised and supervised loss functions, we explicitly align “supervised” feature maps towards “unsupervised” feature maps.

4 Experiments

4.1 Datasets and Protocols

Below, we describe the datasets and evaluation protocols on which we validate our FSAR with JEANIE.

i. UWA3D Multiview Activity II (Rahmani et al., 2016) contains 30 actions performed by 9 people in a cluttered environment. The Kinect camera was used in 4 distinct views: front view (\(V_1\)), left view (\(V_2\)), right view (\(V_3\)), and top view (\(V_4\)).

ii. NTU RGB+D (NTU-60) (Shahroudy et al., 2016) contains 56,880 video sequences and over 4 million frames. This dataset has variable sequence lengths and high intra-class variations.

iii. NTU RGB+D 120 (NTU-120) (Liu et al., 2019) contains 120 action classes (daily/health-related) and 114,480 RGB+D video samples captured with 106 distinct human subjects from 155 different camera viewpoints.

iv. Kinetics (Kay et al., 2017) is a large-scale collection of 650,000 video clips covering 400/600/700 human action classes. It includes human-object interactions such as playing instruments, as well as human-human interactions such as shaking hands and hugging. As the Kinetics-400 dataset provides only raw videos, we follow the approach of Yan et al. (2018) and use the estimated joint locations in the pixel coordinate system as the input to our pipeline. To obtain the joint locations, we first resize all videos to a resolution of 340 \(\times \) 256 and convert the frame rate to 30 FPS. Then we use the publicly available OpenPose toolbox (Cao et al., 2017) to estimate the locations of 18 joints in every frame of the clips. As OpenPose produces 2D body joint coordinates and Kinetics-400 does not offer multi-view or depth data, we use the network of Martinez et al. (2017), pre-trained on Human3.6M (Ionescu et al., 2014), on top of the 2D OpenPose output to estimate 3D coordinates from 2D coordinates. OpenPose and the latter network give us the (x, y) and z coordinates, respectively.

Evaluation protocols. For UWA3D Multiview Activity II, we use the standard multi-view classification protocol (Rahmani et al., 2016; Wang et al., 2020b), but we apply it to one-shot learning, as the view combinations for the training and testing sets are disjoint. For NTU-120, we follow the standard one-shot protocol (Liu et al., 2019). Based on this protocol, we create a similar one-shot protocol for NTU-60, with 50/10 action classes used for training/testing, respectively. To evaluate the effectiveness of the proposed method for viewpoint alignment, we also create two new protocols on NTU-120, for which we group the whole dataset based on (i) horizontal camera views into left, center and right views, and (ii) vertical camera views into top, center and bottom views. We conduct two sets of experiments on such disjoint view-wise splits: (i) (100/same 100): training on 100 action classes and testing on the same 100 action classes, and (ii) (100/novel 20): training on 100 action classes and testing on the remaining 20 unseen classes. Appendix H provides more details of the training/evaluation protocols (subject splits, etc.) for the small-scale datasets as well as the large-scale Kinetics-400 dataset.

Stereo projections. To simulate different camera viewpoints, we estimate the fundamental matrix \(\textbf{F}\) (Eq. (19)), which relies on camera parameters. Thus, we use the Camera Calibrator from MATLAB to estimate intrinsic, extrinsic and lens distortion parameters. For a given skeleton dataset, we compute the ranges of the spatial coordinates x and y. We then split each range into 3 equally-sized groups, which roughly form left, center and right views (from x) and bottom, center and top views (from y). We choose about 15 frames from each corresponding group, upload them to the Camera Calibrator, and export the camera parameters. We then compute the average distance/depth and height per group to estimate the camera position. On NTU-60 and NTU-120, we simply group the whole dataset into 3 cameras (left, center and right views) as provided in Liu et al. (2019), and then compute the average distance per camera view based on the height and distance settings given in the table in Liu et al. (2019).

4.2 Ablation Studies

We start our experiments by investigating various architectural choices and key hyperparameters of our model.

Camera viewpoint simulations. We choose 15 degrees as the step size for the viewpoint simulation. The ranges of camera azimuth and altitude are within [\(-90^\circ \), \(90^\circ \)]. Where stated, we perform a grid search on camera azimuth and altitude with Hyperopt (Bergstra et al., 2015). Below, we explore the choice of the angle ranges for both horizontal and vertical views. Figures 9a and 9b (evaluations on the NTU-60 dataset) show that the angle range \([-45^\circ , 45^\circ ]\) performs best, and widening the range in both views does not increase the performance any further. Table 1 shows results for the chosen range \([-45^\circ ,45^\circ ]\) of camera viewpoint simulations. (Euler simple (\(K\!+\!K'\))) denotes a simple concatenation of features from both horizontal and vertical views, whereas (Euler (\(K\!\times \!K'\))) and (CamVPC(\(K\!\times \!K'\))) represent the grid search over all possible views. The table shows that Euler angles for the viewpoint augmentation outperform (Euler simple), and (CamVPC) (viewpoints of query sequences are generated by the stereo projection geometry) outperforms Euler angles in almost all the experiments on NTU-60 and NTU-120. This supports the effectiveness of using the stereo projection geometry for the viewpoint augmentation.
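To make the viewpoint grid concrete, here is a NumPy sketch of the Euler-angle variant: a skeleton sequence is rotated about the y axis (azimuth-like) and the x axis (altitude-like) over \([-45^\circ , 45^\circ ]\) in \(15^\circ \) steps, giving \(K\!=\!K'\!=\!7\) and 49 simulated views. The axis conventions, the rotation order and rotating about the skeleton centroid are assumptions; the CamVPC variant based on stereo projection is not reproduced here.

```python
import numpy as np

def rot_x(deg):
    r = np.deg2rad(deg)
    return np.array([[1.0, 0.0, 0.0],
                     [0.0, np.cos(r), -np.sin(r)],
                     [0.0, np.sin(r), np.cos(r)]])

def rot_y(deg):
    r = np.deg2rad(deg)
    return np.array([[np.cos(r), 0.0, np.sin(r)],
                     [0.0, 1.0, 0.0],
                     [-np.sin(r), 0.0, np.cos(r)]])

def simulate_views(skel, max_deg=45, step=15):
    """skel: (T, J, 3) joint coordinates. Returns (K, K', T, J, 3) rotated copies."""
    angles = np.arange(-max_deg, max_deg + step, step)      # -45, -30, ..., 45
    center = skel.reshape(-1, 3).mean(axis=0)               # rotate about the centroid
    views = [[(skel - center) @ (rot_y(ay) @ rot_x(ax)).T + center
              for ax in angles]                             # altitude-like axis (K')
             for ay in angles]                              # azimuth-like axis (K)
    return np.array(views)

rng = np.random.default_rng(0)
skel = rng.standard_normal((10, 25, 3))     # T = 10 frames, J = 25 joints
views = simulate_views(skel)
```

Rotations preserve bone lengths, so every simulated view is a physically valid pose of the same subject; only its orientation w.r.t. the camera changes.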

Block size M and stride size S. Recall from Fig. 1 that each skeleton sequence is divided into short-term temporal blocks, which may also partially overlap.

Table 2 shows evaluations w.r.t. block size M and stride S, and indicates that the best performance (in both the 50-class and 20-class settings) is achieved for a smaller block size (frame count in the block) and a smaller stride. Longer temporal blocks decrease the performance because more temporal information is summarized within each block and therefore never reaches the temporal alignment step of JEANIE. Our block encoder encodes each temporal block to learn local temporal motions, and these block features are then aggregated to form global temporal motion cues. A smaller stride helps capture more local motion patterns. Considering the accuracy-runtime trade-off, we choose \(M\!=\!8\) and \(S\!=\!0.6M\) for the remaining experiments.
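The block partitioning can be sketched in a few lines of NumPy. Since \(S\!=\!0.6M\) is not an integer for \(M\!=\!8\), this sketch assumes the stride is rounded to the nearest whole frame (S = 5); the function name and the rounding rule are assumptions.

```python
import numpy as np

def split_into_blocks(seq, M=8, stride_ratio=0.6):
    """Split a (T, J, 3) skeleton sequence into overlapping temporal blocks.

    Returns an array of shape (num_blocks, M, J, 3). The stride S = 0.6 M is
    rounded to an integer number of frames (assumption: S = 5 for M = 8).
    """
    if seq.shape[0] < M:
        raise ValueError("sequence shorter than one block")
    S = max(1, int(round(stride_ratio * M)))
    starts = range(0, seq.shape[0] - M + 1, S)
    return np.stack([seq[s:s + M] for s in starts])

# 50 frames, 25 joints; frame index stored as the coordinate value for easy checking.
seq = np.arange(50, dtype=float)[:, None, None] * np.ones((1, 25, 3))
blocks = split_into_blocks(seq)
```

With T = 50 frames this yields \(\lfloor (50-8)/5\rfloor +1 = 9\) blocks whose consecutive windows overlap by 3 frames.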

GNN as a block of Encoding Network. Recall from Sect. 3.1 and Appendix B that our Encoding Network uses a GNN block. For that reason, we investigate several models with the goal of justifying our default choice.

We conduct experiments on the 4 GNNs listed in Table 3. S\(^2\)GC performs best on large-scale NTU-60 and NTU-120, APPNP outperforms SGC, and SGC outperforms GCN. We also notice that using a GNN as the projection layer performs better than the single FC layer used in the standard transformer, by \(\sim \)5%. Moreover, using the RBF-induced distance for \(d_{base}(\cdot ,\cdot )\) of JEANIE outperforms the Euclidean distance. We therefore choose S\(^2\)GC as a block of our Encoding Network, and we use the RBF-induced base distance for JEANIE and other DTW-based models.

\(\iota \)-max shift. Recall from Sect. 3.2 that the \(\iota \)-max shift controls the smoothness of alignment.

Table 4 shows the evaluations of \(\iota \) for the maximum shift. We notice that \(\iota \!=\!2\) yields the best results for all the experimental settings on both NTU-60 and NTU-120, and increasing \(\iota \) further does not improve the performance. We believe the best \(\iota \) depends on (i) the speed of action execution and (ii) the temporal block size M and the stride S.

4.3 Implementation Details

Before we discuss our main experimental results, below we provide network configurations and training details.

Network configurations. Given the temporal block size M (the number of frames in a block) and the desired output size d, the configuration of the 3-layer MLP unit is: FC (\(3M \rightarrow 6M\)), LayerNorm (LN) as in Dosovitskiy et al. (2020), ReLU, FC (\(6M \rightarrow 9M\)), LN, ReLU, Dropout (rate 0.5 for smaller datasets and 0.1 for large-scale datasets), FC (\(9M \rightarrow d\)), LN. Here, d is the output feature dimension per body joint.

Transformer block. The hidden size of our transformer (the output size of the first FC layer of the MLP in Eq. (7)) depends on the dataset. For smaller-scale datasets, the depth of the transformer is \(L_\text {tr}\!=\!6\) with 64 as the hidden size, and the MLP output size is \({d}\!=\!32\) (note that the MLP which provides \({\widehat{{\textbf{X}}}}\) and the MLP in the transformer must have the same output size). For NTU-60, the depth of the transformer is \(L_\text {tr}\!=\!6\), the hidden size is 128 and the MLP output size is \({d}\!=\!64\). For NTU-120, the depth of the transformer is \(L_\text {tr}\!=\!6\), the hidden size is 256 and the MLP output size is \({d}\!=\!128\). For Kinetics-skeleton, the depth of the transformer is \(L_\text {tr}\!=\!12\), the hidden size is 512 and the MLP output size is \({d}\!=\!256\). The number of heads of the transformer for UWA3D Multiview Activity II, NTU-60, NTU-120 and Kinetics-skeleton is set to 6, 12, 12 and 12, respectively. The output size \(d'\) of the final FC layer in Eq. (9) is 50, 100, 200 and 500 for UWA3D Multiview Activity II, NTU-60, NTU-120 and Kinetics-skeleton, respectively.
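A NumPy forward pass of the 3-layer MLP unit described above, applied per body joint to the 3M coordinates of one temporal block. Assumptions: weights are drawn from N(0, 1) as stated in the training details, FC biases and LayerNorm affine parameters are omitted, dropout is omitted (inference mode), and the output size d is looked up from the per-dataset values listed above; the dictionary keys and function names are hypothetical.

```python
import numpy as np

D_OUT = {"UWA3D": 32, "NTU-60": 64, "NTU-120": 128, "Kinetics": 256}   # MLP output size d

def layer_norm(x, eps=1e-5):
    """LayerNorm over the last axis, without learnable scale/shift."""
    mu = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mu) / np.sqrt(var + eps)

def init_mlp(M, d, rng):
    """FC weights for 3M -> 6M -> 9M -> d, initialized from N(0, 1)."""
    sizes = [3 * M, 6 * M, 9 * M, d]
    return [rng.standard_normal((n_in, n_out)) for n_in, n_out in zip(sizes[:-1], sizes[1:])]

def mlp_unit(block, weights):
    """block: (M, J, 3) temporal block. Returns per-joint features of shape (J, d)."""
    M, J, _ = block.shape
    x = block.transpose(1, 0, 2).reshape(J, 3 * M)      # one 3M-vector per joint
    for i, W in enumerate(weights):
        x = layer_norm(x @ W)                           # FC followed by LN
        if i < len(weights) - 1:
            x = np.maximum(x, 0.0)                      # ReLU after the first two LNs
        # During training, dropout would follow the second ReLU.
    return x

rng = np.random.default_rng(0)
weights = init_mlp(8, D_OUT["NTU-60"], rng)             # M = 8
out = mlp_unit(rng.standard_normal((8, 25, 3)), weights)
```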

Training details. The parameters (weights) of the pipeline are initialized with the normal distribution (zero mean and unit standard deviation). We use 1e-3 as the learning rate, and the weight decay is set to 1e-6. We use the SGD optimizer. We set the number of training episodes to 100K for NTU-60, 200K for NTU-120, 500K for 3D Kinetics-skeleton, and 10K for UWA3D Multiview Activity II. We use Hyperopt (Bergstra et al., 2015) for hyperparameter search on validation sets for all the datasets.

4.4 Discussion on Supervised Few-Shot Action Recognition

NTU-60. Table 5 (Sup.) shows that using the viewpoint alignment simultaneously in two dimensions (x and y for Euler angles, or azimuth and altitude for the stereo projection geometry (CamVPC)) improves the performance by 5–8% compared to (Euler) with a simple concatenation of viewpoints, a variant where the best viewpoint alignment path was chosen from the best alignment path along x and the best alignment path along y. Euler with (simple concat.) is better than Euler with y rotations only ((V) includes rotations along y, while (2V) includes rotations along two axes). We indicate where temporal alignment (T) is also used. When we use HyperOpt (Bergstra et al., 2015) to search for the best angle range in which to perform the viewpoint alignment (CamVPC), the results improve further. Enabling the viewpoint alignment for support sequences (CamVPC) yields an extra improvement, and our best variant of JEANIE boosts the performance by \(\sim \)2%.

We also show that aligning query and support trajectories by the angle of the torso 3D joint, denoted (Traj. aligned), is not very effective. We note that aligning piece-wise parts (blocks) is better than trying to align entire trajectories. In fact, aligning individual frames by the torso to the frontal view (Each frame to frontal view) and aligning the block average of the torso direction to the frontal view (Each block to frontal view) were marginally better. Note that these baselines use soft-DTW.

NTU-120. Table 6 (Sup.) shows that our proposed method achieves the best results on NTU-120, outperforming the recent SL-DML and Skeleton-DML by 6.1% and 2.8%, respectively (100 training classes). Note that Skeleton-DML requires a pre-trained model for weight initialization, whereas our proposed model with JEANIE is fully differentiable. For comparison, we extended the view adaptive neural networks (Zhang et al., 2019) by combining them with ProtoNet (Snell et al., 2017). VA-RNN+VA-CNN (Zhang et al., 2019) uses 0.47M+24M parameters with random rotation augmentations, while JEANIE uses 0.25–0.5M parameters. Their rotation+translation keys are not proven to perform a smooth optimal alignment as JEANIE does. In contrast, \(d_\text {JEANIE}\) jointly performs a viewpoint-temporal alignment that is smooth by design. They also use Euler angles, which are a worse option (see Tables 5 and 6) than the camera projection of JEANIE. We notice that ProtoNet+VA backbones are 12% worse than our JEANIE. Even if we split skeletons into blocks to let soft-DTW perform temporal alignment of prototypes and query, JEANIE is still 4–6% better. Notice also that JEANIE with a transformer is between 3% and 6% better than JEANIE without a transformer, which validates the use of the transformer on large datasets.
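The ProtoNet component of the ProtoNet+VA baseline classifies a query by its nearest class prototype. The sketch below shows only that step: plain lists stand in for the embeddings that the VA backbones would produce, and the helper names are hypothetical.

```python
def prototypes(support):
    """Class prototype = mean embedding of that class's support samples."""
    protos = {}
    for label, embs in support.items():
        dim = len(embs[0])
        protos[label] = [sum(e[k] for e in embs) / len(embs) for k in range(dim)]
    return protos


def sq_euclidean(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))


def classify(query, protos):
    """Assign the query to the nearest prototype (squared Euclidean distance)."""
    return min(protos, key=lambda c: sq_euclidean(query, protos[c]))
```

Because the prototype is a plain mean over whole-sequence embeddings, nothing in this step aligns sequences in time or viewpoint, which is the gap that block-wise soft-DTW and JEANIE address.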


Kinetics-skeleton. We evaluate our proposed model on both 2D and 3D Kinetics-skeleton, following the training and evaluation protocol in Appendix H. Table 7 shows that using 3D skeletons outperforms using 2D skeletons by 3–4%. Temporal alignment only (with soft-DTW) outperforms the baseline (without alignment) by \(\sim \)2% and 3% on 2D and 3D skeletons, respectively, and JEANIE outperforms temporal alignment only by around 5%. Our best variant with JEANIE further boosts results by 2%. We notice that the improvements from camera viewpoint simulation (CamVPC) over Euler angles are limited, around 0.3% and 0.6% for JEANIE and FVM, respectively. The main reason is that Kinetics-skeleton is a large-scale dataset collected from YouTube videos, and camera viewpoint simulation becomes unreliable, especially when videos are captured by multiple different devices, e.g., cameras and mobile phones.

4.5 Discussion on Unsupervised Few-Shot Action Recognition

Recall from Sect. 3.4 that JEANIE can help train unsupervised FSAR by forming a dictionary based on the temporal-viewpoint alignment of JEANIE, which factors out nuisance temporal and pose variations in sequences.

However, the choice of feature coding and dictionary learning method can affect the performance of unsupervised learning. Thus, we investigate several variants from Appendix C.

Table 5 (Unsup.) and Table 11 in Appendix E (an extension of Table 6 (Unsup.)) show on NTU-60 and NTU-120 that the LcSA coder outperforms SA by \(\sim \)0.6% and 1.5%, respectively, whereas SA outperforms LLC by \(\sim \)1.5% and 2%. As LcSA and SA are based on non-linear sigmoid-like reconstruction functions, we suspect they are more robust than the linear reconstruction function of LLC. Since LcSA is the best performer in our experiments, followed by SA and then LLC or SC, we choose LcSA for further analysis.
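For readers unfamiliar with the two coders, the sketch below uses a common formulation of soft-assignment (SA) coding and its locality-constrained variant (LcSA), which keeps only the k nearest atoms. The exact definitions used in our experiments are given in Appendix C. Here squared Euclidean distance stands in for the JEANIE distance between a sequence and a dictionary atom, and `beta` and `k` are hypothetical values.

```python
import math


def sq_dist(x, b):
    return sum((xi - bi) ** 2 for xi, bi in zip(x, b))


def soft_assignment(x, atoms, beta=1.0):
    """SA: code_k proportional to exp(-beta * d(x, b_k)) over all atoms."""
    d = [sq_dist(x, b) for b in atoms]
    m = min(d)
    w = [math.exp(-beta * (di - m)) for di in d]  # shift by the min for stability
    s = sum(w)
    return [wi / s for wi in w]


def lc_soft_assignment(x, atoms, k=2, beta=1.0):
    """LcSA: as SA, but restricted to the k nearest atoms; all others get 0."""
    d = [sq_dist(x, b) for b in atoms]
    nearest = sorted(range(len(atoms)), key=d.__getitem__)[:k]
    m = d[nearest[0]]
    w = {i: math.exp(-beta * (d[i] - m)) for i in nearest}
    s = sum(w.values())
    return [w[i] / s if i in w else 0.0 for i in range(len(atoms))]
```

Both coders map distances through a non-linear exponential weighting. LcSA additionally zeroes out distant atoms, so far-away dictionary entries cannot add noise to the code.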

Table 5 (Unsup.), and Tables 11 and 12 in Appendix E (extensions of Tables 6 (Unsup.) and 7 (Unsup.)) also show that the choice of distance measure for comparing the dictionary-coded vectors of sequences during the test stage does not affect the performance much. The kernel-induced distances (e.g., the HIK and CSK distances) and the \(\ell _2\) norm outperform the \(\ell _1\) norm by \(\sim \)0.5% on average. We choose the CSK distance as the default distance for comparing dictionary-coded vectors in unsupervised JEANIE with LcSA, as it was marginally the better performer in the majority of experiments.

Tables 5 (Unsup.), 6 (Unsup.) and 7 (Unsup.) show that unsupervised JEANIE (temporal-viewpoint alignment) outperforms soft-DTW (temporal alignment only) by up to 5%, 9% and 6% on NTU-60, NTU-120 and Kinetics-skeleton, respectively. Table 8 (Unsup.) shows that the biggest improvement, a 10% performance gain, is obtained when using unsupervised JEANIE on the UWA3D Multiview Activity II dataset. This underlines the importance of joint temporal-viewpoint alignment under heavy camera pose variations.
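The soft-DTW baseline (temporal alignment only) replaces the hard minimum of DTW with a smoothed minimum. A minimal sketch follows. Scalars with a squared difference stand in for temporal blocks and their feature distance, and `gamma` is the smoothing parameter.

```python
import math


def softmin(values, gamma):
    """Smoothed minimum -gamma * log(sum(exp(-v / gamma))), computed stably."""
    m = min(values)
    if math.isinf(m):
        return m
    return m - gamma * math.log(sum(math.exp(-(v - m) / gamma) for v in values))


def soft_dtw(x, y, gamma=1.0, dist=lambda a, b: (a - b) ** 2):
    """Soft-DTW: R[i][j] = dist(x_i, y_j) + softmin of the three predecessors."""
    n, m = len(x), len(y)
    R = [[math.inf] * (m + 1) for _ in range(n + 1)]
    R[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            R[i][j] = dist(x[i - 1], y[j - 1]) + softmin(
                [R[i - 1][j - 1], R[i - 1][j], R[i][j - 1]], gamma
            )
    return R[n][m]
```

As `gamma` approaches 0 the result approaches the hard DTW cost. For larger `gamma` it can fall below it, even below zero, because all alignment paths contribute to the soft-min. Only the time axis is warped here, so a viewpoint mismatch between the two sequences cannot be compensated.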

Interestingly, FVM performs worse than JEANIE in unsupervised learning, e.g., JEANIE outperforms FVM by \(\sim \)3%, 4% and 3% on NTU-60, NTU-120 and Kinetics-skeleton, respectively, in Tables 5 (Unsup.), 6 (Unsup.) and 7 (Unsup.). On UWA3D Multiview Activity II in Table 8 (Unsup.), JEANIE outperforms FVM by more than 5%. This is because FVM always seeks the best local viewpoint alignment at every step of soft-DTW, which yields a non-smooth temporal-viewpoint path, in contrast to JEANIE. Without the guidance of label information, FVM fails to capture the relationship between temporal and viewpoint alignment. Thus, FVM produces a worse dictionary than JEANIE, which validates the need for jointly factoring out temporal and viewpoint nuisance variations from sequences.
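The contrast between FVM and JEANIE can be shown with a simplified dynamic program. The sketch assumes a hard minimum instead of the soft-min, a single viewpoint axis, and a cost tensor `D[v][i][j]` holding the distance between query block `i` under simulated view `v` and support block `j`. FVM picks the cheapest view independently at every cell. The JEANIE-style recursion lets the path change view only by `max_view_step` between consecutive steps, and otherwise uses the same temporal moves as DTW.

```python
import math

INF = math.inf


def dtw(cost):
    """Hard-min DTW over an n x m cost matrix."""
    n, m = len(cost), len(cost[0])
    R = [[INF] * (m + 1) for _ in range(n + 1)]
    R[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            R[i][j] = cost[i - 1][j - 1] + min(R[i - 1][j - 1], R[i - 1][j], R[i][j - 1])
    return R[n][m]


def fvm_distance(D):
    """FVM-style: free choice of the best view at every cell, then temporal DTW."""
    V, n, m = len(D), len(D[0]), len(D[0][0])
    return dtw([[min(D[v][i][j] for v in range(V)) for j in range(m)] for i in range(n)])


def jeanie_distance(D, max_view_step=1):
    """JEANIE-style (simplified): joint temporal-viewpoint path in which the
    view index may change by at most max_view_step between steps."""
    V, n, m = len(D), len(D[0]), len(D[0][0])
    R = [[[INF] * (m + 1) for _ in range(n + 1)] for _ in range(V)]
    for v in range(V):
        R[v][0][0] = 0.0  # the path may start under any simulated view
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            for v in range(V):
                lo, hi = max(0, v - max_view_step), min(V - 1, v + max_view_step)
                best = min(
                    min(R[u][i - 1][j - 1], R[u][i - 1][j], R[u][i][j - 1])
                    for u in range(lo, hi + 1)
                )
                R[v][i][j] = D[v][i - 1][j - 1] + best
    return min(R[v][n][m] for v in range(V))


# Toy case: the cheap matches sit under non-adjacent views 0 and 2.
D = [[[1.0, 1.0], [1.0, 1.0]] for _ in range(3)]
D[0][0][0] = 0.0
D[2][1][1] = 0.0
print(fvm_distance(D), jeanie_distance(D))  # 0.0 1.0
```

FVM reaches zero cost by jumping from view 0 to view 2. The smooth path cannot make that jump, so it pays for it. In this simplified sketch, raising `max_view_step` until every view is reachable recovers the FVM result, which shows that the smoothness constraint is what separates the two methods.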

Table 9 (Unsup.) shows that, on our newly introduced multi-view classification protocol on NTU-120, unsupervised JEANIE outperforms the baseline (temporal alignment only with soft-DTW) by 7% and 8% on average on (100/same 100) and (100/novel 20), respectively. Moreover, JEANIE outperforms FVM by around 4% and 3% on (100/same 100) and (100/novel 20), respectively.

4.6 Discussion on JEANIE and FVM

For supervised learning, JEANIE outperforms FVM by 2–4% on NTU-120 and by around 6% on Kinetics-skeleton. For unsupervised learning, JEANIE improves over FVM by around 3% on average on NTU-60, NTU-120 and Kinetics-skeleton. On UWA3D Multiview Activity II, JEANIE outperforms FVM by 4% and 5% in the supervised and unsupervised experiments, respectively. This shows that jointly seeking the best temporal-viewpoint alignment is more valuable than treating viewpoint alignment as a separate local alignment task (free-range alignment at each step of soft-DTW). By and large, FVM performs better than soft-DTW (temporal alignment only) by 3–5% on average.

To explain what makes JEANIE perform well when comparing pairs of sequences, we provide several visualizations. To this end, we choose skeleton sequences from UWA3D Multiview Activity II, which contains rich viewpoint configurations and is therefore well suited to comparing FVM and JEANIE. We verify that JEANIE finds better matching distances than FVM in the following two scenarios.

Matching similar actions. We choose a walking skeleton sequence (‘a12_s01_e01_v01’) as the query sample, with more viewing angles for the camera viewpoint simulation, and we select another walking skeleton sequence from a different view (‘a12_s01_e01_v03’) and a running skeleton sequence (‘a20_s01_e01_v02’) as the support samples.

Matching actions with similar motion trajectories. We choose a two-hand punching skeleton sequence (‘a04_s01_e01_v01’) as the query sample, with more viewing angles for the camera viewpoint simulation, and we select another two-hand punching skeleton sequence from a different view (‘a04_s05_e01_v02’) and a holding head skeleton sequence (‘a10_s05_e01_v02’) as the support samples.
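The sequence identifiers in the two scenarios follow a fixed pattern. The parser below is a small sketch for decoding them. It assumes that `a`, `s`, `e` and `v` stand for action, subject, episode (repetition) and view. This reading is consistent with the identifiers above, where the two walking sequences share `a12` and differ in `v`, but the field names are our assumption.

```python
import re

# Hypothetical reading of UWA3D ids: a<action>_s<subject>_e<episode>_v<view>
_UWA3D_ID = re.compile(r"a(\d+)_s(\d+)_e(\d+)_v(\d+)")


def parse_uwa3d_id(name):
    """Split an id such as 'a12_s01_e01_v03' into integer fields."""
    m = _UWA3D_ID.fullmatch(name)
    if m is None:
        raise ValueError(f"unexpected sequence id: {name!r}")
    action, subject, episode, view = map(int, m.groups())
    return {"action": action, "subject": subject, "episode": episode, "view": view}
```

For instance, the query ‘a12_s01_e01_v01’ and the within-class support ‘a12_s01_e01_v03’ decode to the same action and subject but views 1 and 3.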

Figures 10 and 11 show the visualizations. Comparing the FVM results in Fig. 10a, b, we notice that for skeleton sequences from different action classes (walking vs. running), FVM finds a path with a very small distance \(d_\text {FVM}\!=\!2.68\). However, for sequences from the same action class (walking vs. walking), FVM gives \(d_\text {FVM}\!=\!4.60\), which is higher than the between-class distance. This is an undesired effect which may result in a wrong comparison decision. In contrast, in Fig. 10c, d, JEANIE gives \(d_\text {JEANIE}\!=\!8.57\) for sequences of the same action class and \(d_\text {JEANIE}\!=\!11.21\) for sequences from different action classes, i.e., the within-class distance is smaller than the between-class distance. This is a very important property when comparing pairs of sequences.
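The "wrong comparison decision" can be made concrete with a nearest-neighbor decision over the two support sequences, using exactly the distances reported for Fig. 10. The helper name is illustrative.

```python
def nearest_class(distances):
    """Nearest-neighbour decision: pick the support class with the smallest distance."""
    return min(distances, key=distances.get)


# Distances of the walking query to the two support sequences (Fig. 10).
fvm = {"walking": 4.60, "running": 2.68}
jeanie = {"walking": 8.57, "running": 11.21}
print(nearest_class(fvm), nearest_class(jeanie))  # running walking
```

Under FVM the walking query is labeled as running, while JEANIE's distances lead to the correct label.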

Figure 11 leads to similar observations: JEANIE produces more reasonable matching distances than FVM.

Visualization of FVM and JEANIE for walking vs. walking (two different sequences) and walking vs. running. We notice that for the two sequences of different actions in (b), the greedy FVM finds a path with a very small distance \(d_\text {FVM}\!=\!2.68\), but for sequences of the same action class, FVM gives \(d_\text {FVM}\!=\!4.60\). This is clearly suboptimal as the within-class distance is higher than the between-class distance (to counteract this issue, we propose JEANIE). In contrast, JEANIE produces a smaller distance for within-class sequences and a larger distance for between-class sequences, which is a very important property when comparing pairs of sequences.

Visualization of FVM and JEANIE for two-hand punching vs. two-hand punching (two different sequences) and two-hand punching vs. holding head. We notice that for the two sequences of different actions in (b), the greedy FVM finds a path that results in \(d_\text {FVM}\!=\!1.63\), yet FVM gives \(d_\text {FVM}\!=\!1.95\) for the two sequences of the same class. The within-class distance should be smaller than the between-class distance, but greedy approaches such as FVM cannot handle this requirement well. JEANIE gives a smaller distance for within-class sequences than for between-class sequences, which is very important when comparing sequences.

4.7 Discussion on Multi-View Action Recognition

As mentioned in Sect. 4.5, JEANIE yields good results, especially in unsupervised learning, with performance gains over FVM of more than 5% on UWA3D Multiview Activity II and around 4% on the NTU-120 multi-view classification protocol. Below, we discuss multi-view supervised FSAR.

Table 8 (Sup.) shows that adding temporal alignment (with soft-DTW) to SGC, APPNP and S\(^2\)GC improves results on UWA3D Multiview Activity II, and that a large further gain is obtained by additionally applying viewpoint alignment via JEANIE. Although the dataset is challenging due to novel viewpoints, JEANIE performs consistently well on all combinations of training/testing viewpoint settings. This is expected, as our method aligns both the temporal and camera-viewpoint modes, which enables robust classification. On average, JEANIE outperforms FVM by 4.2% and the baseline (temporal alignment only with soft-DTW) by 7%.

The influence of camera views on UWA3D Multiview Activity II has been explored by Wang (2017) and Wang et al. (2020b). They show that when the left view \(V_2\) and right view \(V_3\) are used for training and the front view \(V_1\) for testing, the recognition accuracy is high, since the viewing angle of the front view \(V_1\) lies between those of \(V_2\) and \(V_3\). When the left view \(V_2\) and top view \(V_4\) are used for training and the right view \(V_3\) for testing (or the front view \(V_1\) and right view \(V_3\) for training and the top view \(V_4\) for testing), the recognition accuracies are slightly lower. However, as shown in Table 8 (Sup.), JEANIE is able to handle the influence of viewpoints and performs almost equally well on all 12 view combinations, which highlights the importance of jointly aligning both the temporal and viewpoint modes of sequences.
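The count of 12 view combinations follows from the protocol structure. The sketch assumes each setting trains on two of the four views and tests on one of the remaining two, as in the examples above, which gives \(\binom{4}{2} \times 2 = 12\) settings.

```python
from itertools import combinations

VIEWS = ("V1", "V2", "V3", "V4")


def view_protocols(views=VIEWS):
    """All (training views, testing view) pairs: two views for training,
    one of the remaining views for testing."""
    protocols = []
    for train in combinations(views, 2):
        for test in views:
            if test not in train:
                protocols.append((train, test))
    return protocols


print(len(view_protocols()))  # 12
```

The enumeration includes the settings discussed above, e.g., training on \(V_2\) and \(V_3\) and testing on \(V_1\), or training on \(V_2\) and \(V_4\) and testing on \(V_3\).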

Table 9 (Sup.) shows the experimental results on NTU-120. We notice that adding more camera viewpoints to the training process helps multi-view classification. For example, when training on the bottom and center views and testing on the top view, or training on the left and center views and testing on the right view, the performance gain is more than 4% on (100/same 100). Notice that even when we test on 20 novel classes (100/novel 20) that are never used in the training set, we still achieve 62.7% and 70.8% for multi-view classification in horizontal/vertical camera viewpoints.

4.8 Fusion of Supervised and Unsupervised FSAR

Recall that Sect. 3.5 defines two baseline and two advanced strategies for fusing supervised and unsupervised learning, motivated by their complementary nature.

Tables 5 (Fusion), 6 (Fusion), 7 (Fusion) and 8 (Fusion) show that fusion improves the performance. The MAML-inspired fusion yields 5%, 5.1%, 4.2% and 9% improvements over supervised FSAR alone on NTU-60, NTU-120, Kinetics-skeleton and UWA3D Multiview Activity II, respectively. This validates our assertion that JEANIE helps design a robust feature space for comparing sequences in both supervised and unsupervised scenarios.

The adaptation-based fusion (Adaptation-based) performs almost as well as the MAML-inspired fusion, within 1% across datasets. This is expected, as MAML algorithms are designed to learn across multiple tasks. In our case, the unsupervised reconstruction-driven loss and the supervised loss interact via gradient updates, so that the unsupervised information (a form of clustering) is transferred to guide the supervised loss. The domain-adaptation-inspired feature alignment achieves a similar effect, but the transfer between the unsupervised and supervised losses occurs at the feature representation level due to feature alignment.
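The gradient-level interaction described above can be illustrated with a deliberately tiny toy. This is not the formulation of Sect. 3.5. It uses a single scalar parameter \(w\) and two hypothetical quadratic losses: \(L_u(w) = \tfrac{a}{2}(w-u)^2\) stands in for the unsupervised loss (inner step) and \(L_s(w) = \tfrac{b}{2}(w-s)^2\) stands in for the supervised loss (outer step). The outer update differentiates through the inner unsupervised step, as in MAML.

```python
def maml_fusion_toy(w=0.0, u=0.0, s=1.0, a=1.0, b=1.0, alpha=0.5, beta=1.0, steps=200):
    """Toy MAML-style coupling on a scalar w (hypothetical quadratic losses)."""
    for _ in range(steps):
        w_adapted = w - alpha * a * (w - u)  # inner gradient step on L_u
        # exact meta-gradient: d/dw L_s(w_adapted) = L_s'(w_adapted) * (1 - alpha * a)
        meta_grad = b * (w_adapted - s) * (1.0 - alpha * a)
        w -= beta * meta_grad  # outer step on L_s
    return w


def closed_form(u=0.0, s=1.0, a=1.0, alpha=0.5):
    """Fixed point: the w whose one-step unsupervised adaptation lands on s."""
    return (s - alpha * a * u) / (1.0 - alpha * a)
```

With the default values the iteration converges to \(w = 2\). From there, one unsupervised step moves the parameter exactly to the supervised optimum \(s = 1\). The supervised objective thus shapes the parameter *through* the unsupervised update, rather than just adding the two losses.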

Training one EN on the fusion of both supervised and unsupervised FSAR outperforms a naive fusion of scores (Weighted fusion) from two Encoding Networks trained separately. Finetuning an unsupervised model with the supervised loss (Finetuning unsup.) also outperforms the weighted fusion.
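For reference, the weighted-fusion baseline combines scores from two separately trained networks at test time. The sketch below assumes each network yields one distance per support class and uses a hypothetical mixing weight `alpha`. Neither the weight nor any score normalization is specified in this section.

```python
def weighted_fusion(sup, unsup, alpha=0.5):
    """Naive late fusion of per-class distances from two separately trained
    networks: alpha * d_sup + (1 - alpha) * d_unsup."""
    return {c: alpha * sup[c] + (1.0 - alpha) * unsup[c] for c in sup}


def predict(distances):
    """Nearest-neighbour decision on the fused distances."""
    return min(distances, key=distances.get)
```

With `sup = {"a": 1.0, "b": 2.0}` and `unsup = {"a": 3.0, "b": 1.0}`, the prediction flips from "b" at `alpha=0.5` to "a" at `alpha=0.9`. The outcome therefore hinges on a hand-tuned weight and on the two distance scales being comparable, which a jointly trained EN avoids.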

Table 10 compares different testing strategies for the fusion models. The MAML-inspired fusion achieves the best results, with 1.5%, 22.0% and 3.7% improvements when tested on supervised learning, unsupervised learning and a fusion of both, respectively. For both the adaptation-based and MAML-inspired fusions, testing on unsupervised FSAR only (nearest neighbor on dictionary-encoded vectors) performs close to supervised FSAR only (nearest neighbor on feature maps), i.e., within 5%. The reduced performance gap between supervised and unsupervised FSAR suggests that the feature space of EN is adapted to both unsupervised and supervised FSAR.

5 Conclusions